In 2026, the AI landscape has fundamentally shifted. Over the past few years, Large Language Models (LLMs) have dominated enterprise conversations. They draft emails, summarize reports, generate code, and power intelligent chatbots. For many organizations, deploying an LLM felt like deploying “AI.”

But a new architectural layer has moved from experimentation to production scale: AI agents. Unlike LLMs, which generate responses, AI agents plan, decide, take action, and iterate toward defined goals across business systems. They do not just answer questions; they execute workflows.

This shift is creating both opportunity and confusion. The terms “LLM” and “AI agent” are now frequently used interchangeably in boardrooms, vendor pitches, and strategy decks. But they are not the same thing.

This guide clearly explains what an LLM and an AI agent are, how each works, how they work together in modern enterprise architecture, when to use each, and how to design an AI strategy that delivers measurable ROI in 2026.

What is a Large Language Model (LLM)?

A Large Language Model (LLM) is a type of artificial intelligence trained on massive amounts of text data to understand, process, and generate human-like language.

Modern LLMs are built on transformer-based architectures and are capable of reasoning, summarizing, translating, coding, and answering complex queries with contextual awareness.

Popular Examples of Large Language Models in 2026

Some of the most widely used LLMs powering enterprise AI systems today include:

- OpenAI GPT models (ChatGPT): Used for conversational AI, coding assistance, research, and enterprise copilots.

- Google Gemini: Integrated across Google Workspace, search, and enterprise AI tools.

- Anthropic Claude: Known for long-context processing and enterprise-grade reasoning.

These models are often embedded into SaaS platforms, automation tools, and enterprise software products.

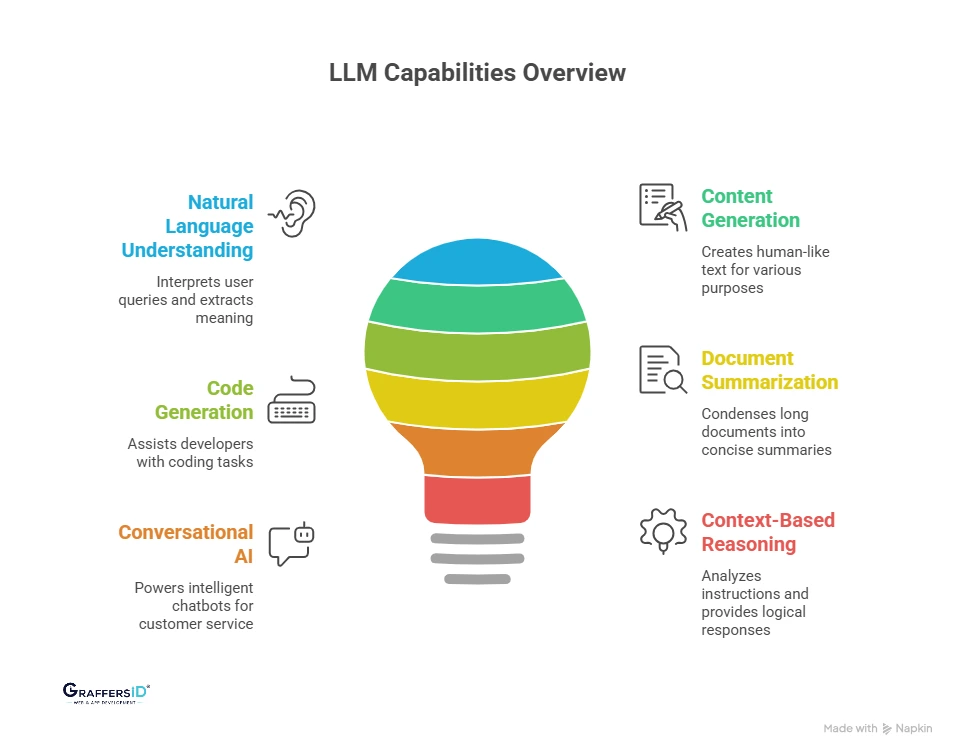

What Can a Large Language Model Do in 2026?

Large Language Models are best suited for language-driven intelligence tasks.

- Natural Language Understanding: LLMs can interpret user queries, analyze sentiment, extract meaning from documents, and answer questions contextually.

- Content and Text Generation: They generate blog posts, emails, reports, marketing copy, and documentation with human-like fluency.

- Code Generation and Technical Assistance: LLMs assist developers by writing code snippets, debugging, explaining logic, and accelerating software development.

- Document Summarization: They condense long reports, contracts, or meeting transcripts into structured summaries.

- Conversational AI: LLMs power intelligent chatbots that handle FAQs, onboarding flows, and internal knowledge queries.

- Context-Based Reasoning: Advanced LLMs can analyze multi-step instructions and provide structured responses based on logical interpretation.

Limitations of LLMs

LLMs Are Reactive Systems: Despite their intelligence, LLMs operate within a specific boundary:

- They respond to prompts.

- They generate outputs.

- They do not take independent actions.

An LLM cannot:

- Update a CRM by itself

- Trigger an automation workflow autonomously

- Execute multi-step tasks without an external system

Without additional architecture (such as an AI agent layer), an LLM remains a powerful language engine, but not an autonomous system.

What is an AI Agent?

An AI agent is a goal-driven AI system that can plan, decide, and take actions automatically to complete tasks.

Unlike a Large Language Model (LLM) that only generates text responses, an AI agent can interact with software systems, use tools, and execute multi-step workflows with minimal human input.

AI agents are the foundation of what is now called Agentic AI architecture in 2026.

How Does an AI Agent Work?

An AI agent works through a structured loop of reasoning and action. Instead of responding once to a prompt, it:

- Understands the goal

- Breaks it into tasks

- Uses tools to perform actions

- Evaluates results

- Adjusts strategy if needed

This continuous cycle enables autonomy.
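To make the cycle concrete, here is a minimal Python sketch of an agent whose goal is to price a list of items. The price list, the `lookup_price` tool, and the `QuoteAgent` class are hypothetical stand-ins. In a real system, an LLM would typically handle the planning step and the tool would call an external API.

```python
from dataclasses import dataclass, field

PRICE_LIST = {"laptop": 1200.0, "monitor": 300.0}  # hypothetical data source

def lookup_price(item: str) -> float:
    """Hypothetical tool; a real agent would call an API here."""
    return PRICE_LIST[item]

@dataclass
class QuoteAgent:
    goal: list[str]                                    # understand the goal: items to price
    results: dict[str, float] = field(default_factory=dict)
    skipped: list[str] = field(default_factory=list)

    def plan(self) -> list[str]:
        # Break the goal into tasks: one lookup per item not yet handled.
        return [i for i in self.goal if i not in self.results and i not in self.skipped]

    def run(self, max_steps: int = 10) -> float:
        for _ in range(max_steps):
            tasks = self.plan()
            if not tasks:                              # evaluate: nothing left to do
                return sum(self.results.values())
            task = tasks[0]
            try:
                self.results[task] = lookup_price(task)  # use a tool to act
            except KeyError:
                self.skipped.append(task)              # adjust: set aside a task the tool can't handle
        raise RuntimeError("step budget exhausted before the goal was met")
```

Calling `QuoteAgent(["laptop", "tablet"]).run()` prices the laptop, sets aside the tablet that the tool cannot handle, and stops once no tasks remain. The `max_steps` cap is worth keeping in any real loop, so that a confused agent fails loudly instead of running forever.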

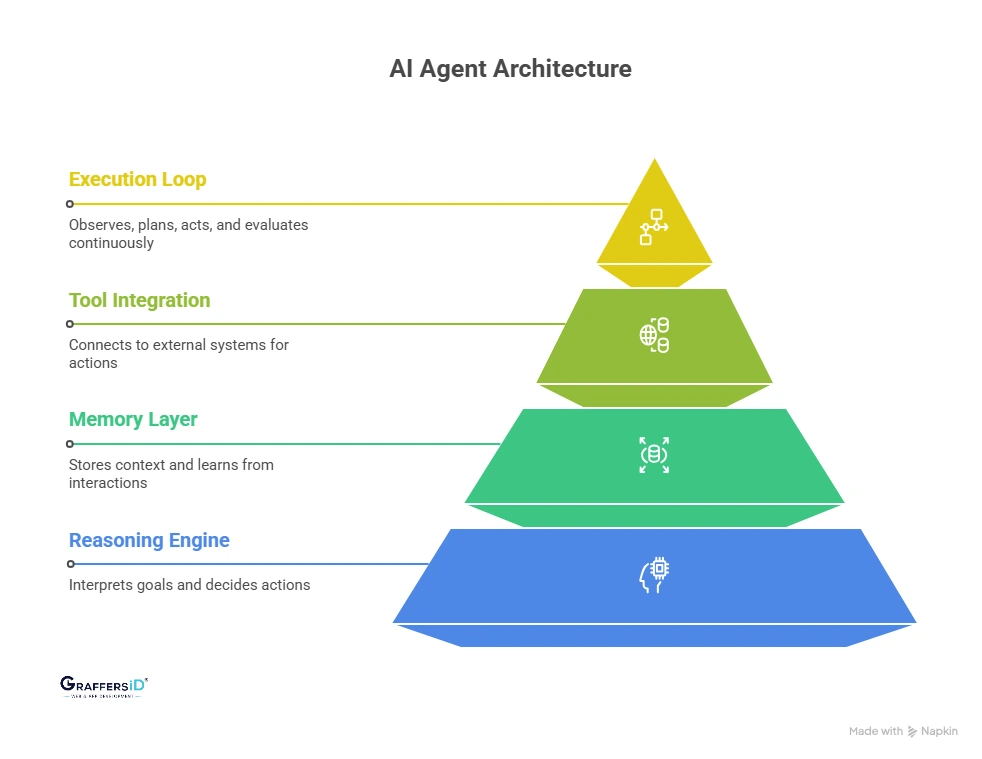

Core Components of an AI Agent Architecture in 2026

Most enterprise AI agents in 2026 are built with the following layers:

1. Reasoning Engine (LLM Layer)

This is the “thinking” component.

- Often powered by OpenAI GPT (the models behind ChatGPT), Google Gemini, or Anthropic Claude

- Interprets goals and instructions

- Breaks complex objectives into smaller tasks

- Decides the next best action

The LLM provides intelligence but not execution by itself.
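A common way to use the LLM as a reasoning engine is to ask it for a structured decision, meaning the next action plus its arguments, and then validate that decision before anything runs. In this sketch, `fake_llm` is a hypothetical placeholder that returns canned JSON so the example runs offline. The action names are also illustrative and do not come from any vendor API.

```python
import json

ALLOWED_ACTIONS = {"search_crm", "draft_email", "finish"}  # hypothetical action set

def fake_llm(prompt: str) -> str:
    """Stand-in for a real model call; returns canned JSON based on progress so far."""
    if "draft_email: done" in prompt:
        return '{"action": "finish", "args": {}}'
    if "search_crm: done" in prompt:
        return '{"action": "draft_email", "args": {"to": "lead@example.com"}}'
    return '{"action": "search_crm", "args": {"query": "Acme Corp"}}'

def decide_next_action(goal: str, history: list[str]) -> dict:
    # Give the model the goal and the completed steps, and ask for a JSON decision.
    prompt = (
        f"Goal: {goal}\n"
        f"Completed steps: {'; '.join(history) or 'none'}\n"
        f'Reply with JSON: {{"action": one of {sorted(ALLOWED_ACTIONS)}, "args": {{...}}}}'
    )
    decision = json.loads(fake_llm(prompt))
    # The engine only proposes; anything outside the allow-list is rejected.
    if decision.get("action") not in ALLOWED_ACTIONS:
        raise ValueError(f"model proposed unknown action: {decision!r}")
    return decision
```

With an empty history, the stub proposes `search_crm`. Once `"search_crm: done"` appears in the history, it proposes `draft_email`. The allow-list check reflects the point above: the model decides, but it does not execute.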

2. Memory Layer (Context & Learning)

AI agents require memory to operate effectively across sessions.

Short-Term Memory

- Maintains context within a task

- Tracks recent actions and responses

Long-Term Memory

- Stores structured information

- Retains user preferences

- Learns from historical interactions

This allows agents to improve over time and maintain continuity.
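Here is a minimal sketch of the two memory tiers, assuming an in-process store. A bounded `deque` holds short-term context, and a per-user dictionary holds long-term facts. The class and method names are hypothetical. Production agents commonly persist long-term memory in a database or vector store instead.

```python
from collections import deque

class AgentMemory:
    """Two-tier memory sketch: bounded short-term context plus a long-term store."""

    def __init__(self, short_term_size: int = 5):
        self.short_term = deque(maxlen=short_term_size)  # recent steps in the current task
        self.long_term: dict[str, dict] = {}             # facts kept across tasks and sessions

    def record_step(self, step: str) -> None:
        self.short_term.append(step)  # the oldest step is dropped once maxlen is reached

    def remember(self, user: str, key: str, value) -> None:
        self.long_term.setdefault(user, {})[key] = value

    def recall(self, user: str, key: str, default=None):
        return self.long_term.get(user, {}).get(key, default)

    def context_for_prompt(self, user: str) -> str:
        # What the agent would prepend to the LLM prompt on its next step.
        prefs = ", ".join(f"{k}={v}" for k, v in self.long_term.get(user, {}).items())
        return f"Recent steps: {list(self.short_term)} | Preferences: {prefs or 'none'}"
```

Short-term memory stays small and is cheap to include in every prompt. Long-term memory is only looked up for the user or task at hand.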


3. Tool Integration Layer (Action Capability)

This layer connects the agent to external systems. It enables the agent to:

- Make API calls

- Update CRM systems

- Query databases

- Trigger automation workflows

- Send emails or notifications

- Interact with SaaS platforms

Without tool access, an AI system is not truly agentic.
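One simple way to build a tool layer is a registry that maps tool names to functions. The reasoning engine can then refer to tools by name, and the agent dispatches the call. The CRM and email functions below are hypothetical in-memory stand-ins for real integrations.

```python
from typing import Callable

TOOLS: dict[str, Callable[..., object]] = {}

def tool(fn: Callable[..., object]) -> Callable[..., object]:
    """Decorator that registers a function so the agent can call it by name."""
    TOOLS[fn.__name__] = fn
    return fn

# Hypothetical in-memory stand-ins for a CRM and an email service.
CRM: dict[str, dict] = {}
OUTBOX: list[tuple[str, str]] = []

@tool
def update_crm(contact: str, **fields) -> dict:
    CRM.setdefault(contact, {}).update(fields)
    return CRM[contact]

@tool
def send_email(to: str, subject: str) -> str:
    OUTBOX.append((to, subject))
    return "queued"

def call_tool(name: str, args: dict) -> object:
    """Dispatch a tool call chosen by the reasoning engine."""
    if name not in TOOLS:
        raise KeyError(f"unknown tool: {name}")
    return TOOLS[name](**args)
```

Because the registry is keyed by name, a tool name plus arguments produced by the LLM can be routed straight to `call_tool`. An unregistered name fails instead of silently doing nothing.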

4. Execution Loop (Autonomous Workflow Engine)

AI agents operate through a continuous loop:

- Observe the environment

- Plan the next action

- Act using tools

- Evaluate the outcome

- Repeat until the goal is achieved

This loop enables real autonomy instead of one-time responses.
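The observe, plan, act, evaluate cycle can be written as a small reusable function. This sketch makes simplifying assumptions. The state is a single number, the planning step is a plain lambda rather than an LLM call, and the restocking environment is hypothetical.

```python
from typing import Callable, TypeVar

S = TypeVar("S")  # type of the observed state

def run_loop(
    observe: Callable[[], S],
    plan: Callable[[S], str],
    act: Callable[[str], None],
    goal_met: Callable[[S], bool],
    max_steps: int = 20,
) -> int:
    """Repeat observe -> evaluate -> plan -> act until the goal is met.

    Returns the number of actions taken; the step cap stops runaway loops.
    """
    for step in range(max_steps):
        state = observe()                # observe the environment
        if goal_met(state):              # evaluate the outcome so far
            return step
        act(plan(state))                 # plan the next action, then act
    raise RuntimeError("goal not reached within the step budget")

# Hypothetical environment: keep widget stock at or above a target level.
stock = {"widgets": 3}
TARGET = 10

def restock(action: str) -> None:
    # Actions look like "order:4"; a real agent would call a purchasing API.
    stock["widgets"] += int(action.split(":")[1])

actions_taken = run_loop(
    observe=lambda: stock["widgets"],
    plan=lambda s: f"order:{min(4, TARGET - s)}",
    act=restock,
    goal_met=lambda s: s >= TARGET,
)
```

Starting from 3 units with a target of 10, the loop orders 4 and then 3. It stops on the third observation, so `actions_taken` is 2.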

What Can AI Agents Do?

AI agents are designed to go beyond generating text or answering prompts. In 2026, they function as autonomous digital workers capable of executing structured, goal-driven tasks across enterprise systems.

- Multi-Step Task Execution: AI agents can break a large objective into smaller tasks and complete them sequentially. Instead of stopping after one response, they continue working until the full goal is achieved.

- Cross-Platform Workflow Automation: AI agents connect with multiple tools such as CRMs, ERPs, databases, and SaaS platforms. They move data, trigger actions, and coordinate systems without manual intervention.

- Decision-Based Action Taking: AI agents evaluate options and choose the next best action based on context and predefined rules. This enables dynamic decision-making rather than fixed automation scripts.

- Real-Time Monitoring and Response: Agents can continuously monitor dashboards, logs, or KPIs and take immediate action when anomalies or threshold breaches occur.

- Goal-Oriented Reasoning: Unlike basic automation tools, AI agents operate around defined goals. They plan, execute, evaluate, and iterate until the objective is completed.
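Real-time monitoring and response often comes down to a rule such as "act when a metric crosses a threshold." The sketch below scans a sequence of hypothetical error-rate readings. It calls a response function only on the transition into a breach, so a sustained spike triggers one action rather than one per reading. In an agent, `respond` would pass the anomaly to the reasoning engine or to a tool; here it just records an incident.

```python
def monitor(readings, threshold: float, respond) -> list[int]:
    """Call `respond(index, value)` each time the metric crosses above `threshold`.

    Only the transition from normal to breached triggers a response, so a
    sustained spike produces one action instead of one per reading.
    """
    alerts = []
    in_breach = False
    for i, value in enumerate(readings):
        breached = value > threshold
        if breached and not in_breach:
            respond(i, value)
            alerts.append(i)
        in_breach = breached
    return alerts

# Hypothetical error-rate readings polled from a dashboard.
incidents: list[str] = []
alerts = monitor(
    [0.5, 0.9, 0.95, 0.4, 0.92],
    threshold=0.8,
    respond=lambda i, v: incidents.append(f"reading {i}: error rate {v:.0%}"),
)
```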

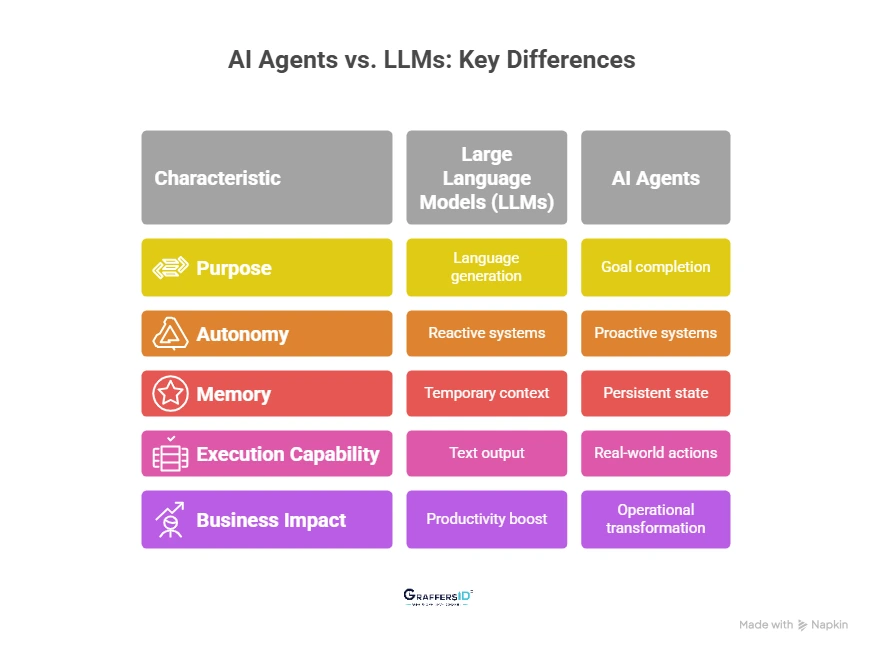

AI Agents vs. LLMs: What Is the Difference in 2026?

Below is a clear breakdown of how AI agents differ from Large Language Models in real-world enterprise use.

1. Purpose: Language Generation vs Goal Completion

Large Language Models (LLMs):

- Designed to understand and generate human-like text

- Best for answering questions, drafting content, summarizing documents, and generating code

- Primarily focused on language intelligence

AI Agents:

- Designed to achieve defined goals

- Break down complex objectives into multiple steps

- Focused on task completion and outcome delivery, not just conversation

2. Autonomy: Reactive vs Proactive Systems

Large Language Models (LLMs):

- Require human prompts to operate

- Do not initiate actions on their own

- Stop after producing a response

AI Agents:

- Operate autonomously once given a goal

- Decide the next steps based on feedback and context

- Continue working until the task is completed or conditions change

In enterprise settings, this difference determines whether AI assists employees or runs entire workflows on its own.

3. Memory: Temporary Context vs Persistent State

Large Language Models (LLMs):

- Limited to a defined context window

- Do not retain long-term memory unless externally engineered

- Cannot naturally track multi-session progress

AI Agents:

- Maintain structured memory (short-term and long-term)

- Store past interactions, task states, and outcomes

- Track progress across sessions and systems

This persistent memory enables AI agents to manage complex, multi-step business processes.
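Persistent state does not require anything exotic. The agent layer can save task progress outside the model and reload it at the start of each session. This sketch assumes a local JSON file as the store and uses hypothetical step names. A real deployment would more likely use a database.

```python
import json
from pathlib import Path

def load_task_state(path: Path) -> dict:
    """Resume a task from disk, or start fresh if no state was saved yet."""
    if path.exists():
        return json.loads(path.read_text())
    return {"completed_steps": [], "status": "in_progress"}

def save_task_state(path: Path, state: dict) -> None:
    path.write_text(json.dumps(state, indent=2))

def complete_step(path: Path, step: str) -> dict:
    state = load_task_state(path)
    if step not in state["completed_steps"]:  # re-running a session won't duplicate work
        state["completed_steps"].append(step)
    save_task_state(path, state)
    return state
```

Because `complete_step` reloads state from disk each time, a new session picks up where the previous one stopped, and recording the same step twice does not duplicate it. The model never stores this state itself. Instead, the agent layer passes the relevant parts into the prompt when needed.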


4. Execution Capability: Text Output vs Real-World Actions

Large Language Models (LLMs):

- Generate text, code, or structured responses

- Cannot directly execute tasks without external integration

- Depend on humans or software layers to act on outputs

AI Agents:

- Call APIs and external tools

- Trigger workflows and automation platforms

- Update CRM or ERP systems

- Send emails and notifications

- Interact with enterprise software environments

This is the architectural leap that moves AI from “assistant” to “operator.”
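A few lines of code show the difference between text output and real-world action. Below, the LLM's output is just a JSON string, and nothing happens until the agent layer parses it, checks it against a schema of allowed tools, and calls a handler. The tool names, schema format, and handlers are hypothetical illustrations, not a specific vendor's function-calling API.

```python
import json

# Hypothetical tool schema: tool name -> required argument names and types.
SCHEMAS = {
    "update_crm_stage": {"contact_id": str, "stage": str},
    "send_notification": {"channel": str, "message": str},
}

# Hypothetical handlers; real ones would call the CRM or messaging APIs.
HANDLERS = {
    "update_crm_stage": lambda contact_id, stage: f"{contact_id} moved to {stage}",
    "send_notification": lambda channel, message: f"posted to #{channel}: {message}",
}

def execute_model_output(raw: str) -> str:
    """Turn an LLM's text output into an action, but only if it is a well-formed
    call to a known tool with correctly typed arguments."""
    call = json.loads(raw)              # so far this is only text from the model
    name, args = call.get("tool"), call.get("args", {})
    schema = SCHEMAS.get(name)
    if schema is None:
        raise ValueError(f"unknown tool: {name!r}")
    for arg_name, arg_type in schema.items():
        if not isinstance(args.get(arg_name), arg_type):
            raise ValueError(f"{name}: {arg_name!r} must be {arg_type.__name__}")
    extra = set(args) - set(schema)
    if extra:
        raise ValueError(f"{name}: unexpected arguments {sorted(extra)}")
    return HANDLERS[name](**args)       # the agent layer performs the action
```

The validation step is the boundary between "assistant" and "operator": the model can suggest any text it likes, but only calls that match a known tool and argument shape ever reach a real system.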

5. Business Impact: Productivity Boost vs Operational Transformation

Large Language Models (LLMs):

- Improve individual productivity

- Reduce manual drafting and research time

- Enhance knowledge access across teams

AI Agents:

- Automate end-to-end workflows

- Reduce operational dependency on manual intervention

- Enable scalable, 24/7 intelligent systems

- Create measurable ROI through automation and efficiency gains

For enterprises in 2026, LLMs optimize tasks, and AI agents optimize operations.

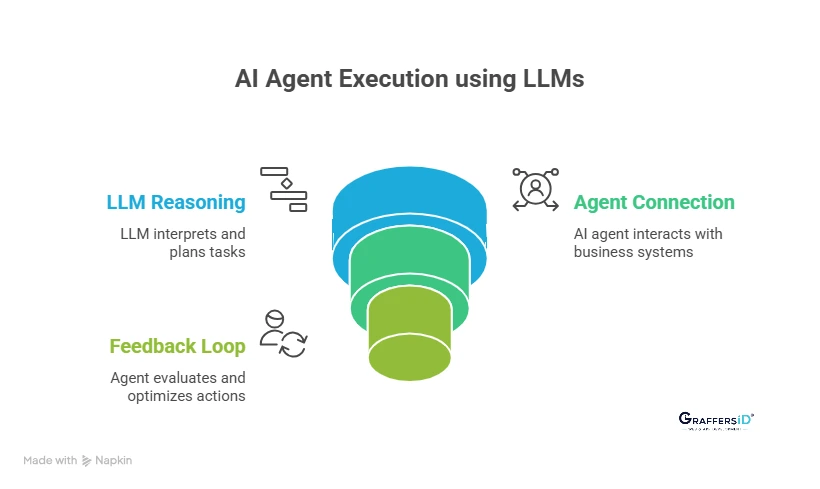

How Do AI Agents Use LLMs in Enterprise Systems in 2026?

In 2026, many production AI systems follow a layered architecture in which Large Language Models (LLMs) act as the reasoning engine and AI agents manage execution.

This structure is commonly referred to as Agentic AI Architecture, a design pattern where intelligence (LLM) and action (agent layer) work together to complete business goals.

Step 1: LLM Handles Reasoning and Planning

At the core of an LLM-based AI agent is the model itself, which functions as the “cognitive engine.”

What the LLM Does:

- Interprets user intent: Converts natural language instructions into structured objectives.

- Breaks down complex tasks: Decomposes large goals into smaller, executable steps.

- Generates execution plans: Determines the logical sequence of actions required to complete the task.
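When the LLM returns a plan, the agent still has to turn it into an executable order. This sketch assumes the model is prompted to return steps with explicit dependencies, using a JSON format of our own choosing (the format and step names are hypothetical). Python's standard-library `graphlib.TopologicalSorter` then produces a valid sequence, and the function rejects circular or dangling dependencies.

```python
import json
from graphlib import CycleError, TopologicalSorter

# A plan as an LLM might return it (hypothetical format): steps plus dependencies.
llm_plan = """
{"objective": "Onboard new customer Acme",
 "steps": [
   {"id": "create_account", "after": []},
   {"id": "send_welcome_email", "after": ["create_account"]},
   {"id": "schedule_kickoff", "after": ["create_account"]},
   {"id": "notify_sales", "after": ["send_welcome_email", "schedule_kickoff"]}
 ]}
"""

def execution_order(plan_json: str) -> list[str]:
    plan = json.loads(plan_json)
    # Map each step to the set of steps that must finish before it.
    graph = {step["id"]: set(step["after"]) for step in plan["steps"]}
    unknown = set().union(*graph.values()) - set(graph)
    if unknown:
        raise ValueError(f"plan references undefined steps: {sorted(unknown)}")
    try:
        return list(TopologicalSorter(graph).static_order())
    except CycleError as exc:
        raise ValueError(f"plan has circular dependencies: {exc.args[1]}") from exc
```

For the sample plan, `create_account` always comes first and `notify_sales` comes last. The two middle steps do not depend on each other, so either order is valid.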

Step 2: AI Agent Connects to Business Tools and Systems

Once the plan is created, the AI agent activates the execution layer.

What the Agent Does:

- Calls enterprise APIs: Interacts with CRM, ERP, HRMS, and analytics platforms.

- Triggers automation workflows: Uses tools like workflow engines, internal dashboards, and ticketing systems.

- Retrieves and updates structured data: Queries databases, modifies records, and pushes updates across systems.

This layer transforms AI from a text generator into an operational executor.
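Here is a sketch of this execution layer for a lead-handling workflow: intake a lead, update the CRM, and send a follow-up email. The systems are in-memory stand-ins, and every function name is hypothetical. Each step's output is stored in a shared context so that later steps can use it, which is how data moves across systems within a single run.

```python
# Hypothetical in-memory stand-ins for a CRM and an email service.
crm_records: dict[str, dict] = {}
sent_emails: list[dict] = []

def intake_lead(ctx: dict) -> dict:
    # Normalize the raw web-form payload into structured fields.
    form = ctx["form"]
    return {"email": form["email"].strip().lower(), "company": form["company"].strip()}

def upsert_crm(ctx: dict) -> dict:
    # Create the CRM record if it does not exist yet; reuse it otherwise.
    lead = ctx["intake_lead"]
    record = crm_records.setdefault(lead["email"], {"company": lead["company"], "stage": "new"})
    return {"crm_id": lead["email"], "stage": record["stage"]}

def send_follow_up(ctx: dict) -> dict:
    lead, crm = ctx["intake_lead"], ctx["upsert_crm"]
    sent_emails.append({"to": lead["email"], "template": "welcome", "crm_id": crm["crm_id"]})
    return {"sent": True}

def run_pipeline(steps, initial: dict) -> dict:
    """Run steps in order; each result is stored under the step's name for later steps."""
    ctx = dict(initial)
    for step in steps:
        ctx[step.__name__] = step(ctx)
    return ctx

result = run_pipeline(
    [intake_lead, upsert_crm, send_follow_up],
    {"form": {"email": " Jane@Acme.com ", "company": "Acme "}},
)
```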

Step 3: Continuous Feedback and Self-Optimization

Modern AI agents do not operate in a single prompt-response cycle. They run in an iterative loop.

How the Feedback Loop Works:

- Evaluates outcomes: Checks whether the executed action achieved the intended goal.

- Adjusts strategy dynamically: Refines prompts, selects alternative tools, or modifies execution steps.

- Repeats until completion: Continues operating until predefined success criteria are met.

This feedback loop is a core part of Agentic AI Architecture. It enables autonomous decision-making, a key differentiator between AI agents and standalone LLM systems.
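The evaluate, adjust, and repeat cycle can be sketched as trying strategies in order and checking each result against a success criterion. The three search functions are hypothetical stand-ins: one returns nothing, one fails, and one succeeds. Here, adjusting strategy means falling back to an alternative tool, which is one of the adjustments listed above.

```python
def run_with_feedback(task: str, strategies: list, succeeded) -> tuple:
    """Try each strategy in turn, evaluate the result against the success
    criterion, and fall back to the next strategy until one passes."""
    log = []
    for strategy in strategies:
        try:
            result = strategy(task)
        except Exception as exc:  # a failed tool call is feedback too
            log.append((strategy.__name__, f"error: {exc}"))
            continue
        ok = succeeded(result)
        log.append((strategy.__name__, "ok" if ok else "rejected"))
        if ok:
            return result, log
    return None, log  # no strategy met the success criterion

# Hypothetical tools: one finds nothing, one fails, one succeeds.
def search_kb(task: str) -> list[str]:
    return []

def search_tickets(task: str) -> list[str]:
    raise TimeoutError("ticket API timed out")

def search_web(task: str) -> list[str]:
    return [f"result for {task}"]

result, log = run_with_feedback(
    "reset password",
    [search_kb, search_tickets, search_web],
    succeeded=lambda r: len(r) > 0,
)
```

The log records why each attempt was rejected. An agent can feed that record back into the LLM prompt so the next plan accounts for what already failed.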

When Should Businesses Use LLMs vs. AI Agents?

A common question enterprises ask in 2026 is: “Should we use an LLM or an AI agent for our business?” The answer depends on whether you need intelligence generation or autonomous execution.

When Should Enterprises Use Large Language Models (LLMs)?

Use LLMs when your primary need is language understanding, content generation, or knowledge assistance. In other words, use them when you need AI to think and generate rather than execute independently.

- AI-Powered Chatbots: Best for customer FAQs, internal helpdesks, and conversational interfaces where responses, not actions, are required.

- Document Automation: Ideal for generating reports, proposals, summaries, contracts, and internal documentation at scale.

- Code Generation Assistance: Useful for developer copilots that write boilerplate code, debug errors, or explain complex logic.

- Knowledge Retrieval Systems: Effective for searching internal documents, policies, or databases using natural language queries.

- AI Copilots for Teams: Supports marketing, HR, legal, and operations teams with drafting, brainstorming, and productivity enhancement.

When Should Enterprises Use AI Agents?

Use AI agents when your goal is automation, system integration, and task completion across workflows. In other words, use them when you need AI to act, decide, and complete work autonomously.

- End-to-End Business Automation: When tasks must move from input to final outcome without manual intervention (e.g., lead intake to CRM update to follow-up email).

- Cross-System Orchestration: When AI must interact with multiple tools (CRM, ERP, ticketing systems, and analytics platforms) in one continuous process.

- Decision-Driven Execution: When the system must evaluate conditions, choose next steps, and adapt dynamically based on results.

- Multi-Step Task Completion: When workflows involve planning, execution, validation, and iteration until a defined goal is achieved.

AI Agents vs. LLMs: Best Enterprise AI Strategy in 2026

In 2026, the smartest enterprises are not choosing between LLMs and AI agents; they are combining them through a phased, low-risk adoption model. Here is a clear, execution-ready roadmap aligned with modern enterprise AI architecture trends.

1. Start with LLM Copilots

Begin by deploying LLM-powered copilots to improve productivity in areas like documentation, customer support, coding, and internal knowledge search. This builds internal AI familiarity while delivering quick, measurable efficiency gains.

2. Add Workflow Automation Layers

Once copilots are stable, connect them to structured workflows using APIs and automation tools. This shifts AI from passive assistance to task-level execution within defined boundaries.

3. Introduce Goal-Driven AI Agents

After automation maturity improves, deploy AI agents that can plan multi-step tasks, make decisions, and execute actions across systems. This is where enterprises move from AI assistance to AI-driven operations.

4. Scale to Multi-Agent Systems

Advanced organizations expand into multi-agent architectures where specialized agents collaborate across sales, operations, support, and analytics. This creates scalable, autonomous ecosystems rather than isolated AI tools.
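Here is a minimal sketch of the simplest multi-agent pattern: an orchestrator that routes each task to a specialized agent. The agents and routing keywords are hypothetical. Keyword matching stands in for the LLM-based classification that a production router would more likely use. Richer collaboration, such as agents handing work to each other, builds on the same dispatch idea.

```python
class SalesAgent:
    name = "sales"

    def handle(self, task: str) -> str:
        # Hypothetical: would draft a quote via CRM and pricing tools.
        return f"[sales] drafted quote for: {task}"

class SupportAgent:
    name = "support"

    def handle(self, task: str) -> str:
        # Hypothetical: would open a ticket in the helpdesk system.
        return f"[support] opened ticket for: {task}"

class Orchestrator:
    """Routes tasks to specialized agents by keyword (a stand-in for LLM routing)."""

    def __init__(self, routes: dict):
        self.routes = routes  # keyword -> agent; checked in insertion order

    def dispatch(self, task: str) -> str:
        for keyword, agent in self.routes.items():
            if keyword in task.lower():
                return agent.handle(task)
        raise LookupError(f"no agent registered for: {task!r}")

    def dispatch_all(self, tasks: list[str]) -> list[str]:
        return [self.dispatch(t) for t in tasks]

sales, support = SalesAgent(), SupportAgent()
orchestrator = Orchestrator({"quote": sales, "pricing": sales, "error": support, "outage": support})
```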

AI Agents vs. LLMs in 2026: Final Takeaway for Enterprise Leaders

If we simplify the debate:

- A Large Language Model (LLM) predicts the most likely next token (a word or part of a word) based on patterns and context. It produces text, code, or structured reasoning.

- An AI agent uses an LLM as its cognitive engine but adds memory, planning, decision-making, and execution layers to actually complete tasks across systems.
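To make next-word prediction concrete, here is a toy bigram model. It counts which word follows which in a tiny hypothetical corpus and picks the most frequent successor. Real LLMs use transformer networks over subword tokens and usually sample from the predicted distribution rather than always taking the top choice. The underlying task is the same, though: estimate what comes next from context.

```python
from collections import Counter, defaultdict

# Tiny hypothetical training corpus, split into word "tokens".
corpus = (
    "the agent calls the api . "
    "the agent calls the crm . "
    "the agent updates the crm ."
).split()

# Count how often each word follows each other word (a bigram model).
follows: dict[str, Counter] = defaultdict(Counter)
for prev, nxt in zip(corpus, corpus[1:]):
    follows[prev][nxt] += 1

def next_word_probs(word: str) -> dict[str, float]:
    counts = follows[word]
    total = sum(counts.values())
    return {w: c / total for w, c in counts.items()}

def predict_next(word: str) -> str:
    # Greedy choice: the single most frequent successor.
    return follows[word].most_common(1)[0][0]

def generate(start: str, n_words: int) -> str:
    words = [start]
    for _ in range(n_words):
        words.append(predict_next(words[-1]))
    return " ".join(words)
```

Here `next_word_probs("agent")` gives `calls` a probability of 2/3 and `updates` a probability of 1/3, and `generate("the", 3)` returns `"the agent calls the"`. The greedy loop quickly repeats itself, which is one reason real models sample from the distribution instead.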

In short, LLMs generate responses, and AI agents deliver outcomes. Together, they form the foundation of modern autonomous enterprise intelligence.

For organizations evaluating AI strategy in 2026, the real question is not “LLM or AI agent?” The smarter approach is a hybrid AI architecture. Use LLMs for reasoning and knowledge tasks, and AI agents for workflow execution and cross-system automation. This approach reduces implementation risk, improves internal adoption, and ensures long-term scalability, making it the most sustainable enterprise AI strategy in 2026.

Companies that win in this decade will not simply deploy AI tools. They will design AI systems that think, act, and continuously improve.

At GraffersID, we help startups and enterprises build intelligent automation systems and scalable AI solutions aligned with business goals.

Hire expert AI developers to build production-ready AI systems.