Every week, engineering teams run dozens of technical interviews. Candidates pass coding assessments, confidently explain system architecture, and clear every round, only to join their first sprint and struggle to write a basic function without AI assistance.

This isn’t a rare case anymore. It’s becoming a pattern in 2026. AI has undeniably improved tech recruitment through faster resume screening, scalable coding evaluations, and access to global talent pools. But the same advancements powering efficient hiring are also equipping candidates with smart tools to manipulate the process.

For CTOs, CEOs, and product leaders, this is no longer just a recruiter’s headache. It’s a strategic business risk that quietly shows up as technical debt, security gaps, missed sprint timelines, rising burn rate, and declining output.

So what does AI-assisted interview fraud actually look like in 2026? How can organizations detect it without turning the hiring process into an interrogation? And is there a framework that lets you trust the humans you’re bringing onto your team?

That’s exactly what this guide covers. We’ll break down the common cheating techniques candidates use, the detection tools worth considering, and a hiring approach that keeps both speed and integrity intact.

What is AI Interview Cheating in 2026?

AI interview cheating refers to the use of artificial intelligence tools by candidates to unfairly generate answers, solve coding problems, manipulate identity, or receive hidden real-time assistance during virtual technical interviews.

In 2026, AI-assisted cheating is more advanced, subtle, and harder to detect than traditional plagiarism.

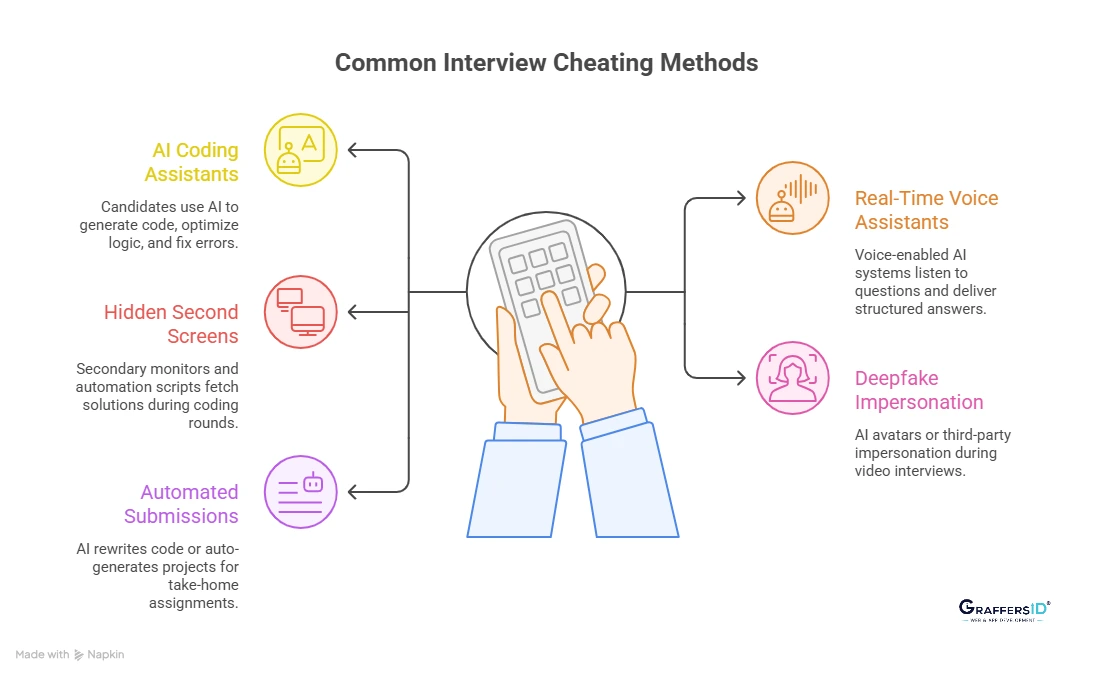

Common Ways Candidates Use AI to Cheat in Technical Interviews in 2026

Below are the most common forms organizations must understand to protect interview integrity and engineering quality:

1. AI Coding Assistants in Live Coding Tests

Candidates use coding copilots such as GitHub Copilot, ChatGPT, and Amazon CodeWhisperer (now part of Amazon Q Developer) to instantly generate algorithms, optimize logic, and fix errors during assessments.

The code often runs successfully and passes test cases, but candidates may struggle to explain complexity analysis, architectural trade-offs, or production edge cases, revealing a gap between output and understanding.

2. Real-Time AI Voice Assistants During Interviews

Some candidates use voice-enabled AI systems that listen to interview questions and deliver structured answers through hidden earbuds or background devices.

These tools provide real-time conceptual explanations, system design guidance, and debugging steps, making responses sound polished while masking genuine skill level. Unlike copy-paste cheating, this method is conversational and requires behavioral detection, not just screen monitoring.

3. Hidden Second Screens and Browser Automation

Modern cheating setups often involve secondary monitors, virtual machines, browser extensions, or automation scripts that silently fetch solutions during coding rounds.

Because AI-generated responses are unique and rewritten in real time, traditional plagiarism detection tools may not flag them. This makes invisible digital assistance one of the most difficult forms of interview manipulation to identify.


4. Deepfake and AI Avatar Impersonation

With improvements in real-time facial overlays and voice synchronization, candidates can use AI avatars or third-party impersonation during video interviews.

In some cases, a more experienced engineer provides answers off-camera while AI tools maintain visual consistency. As video AI becomes more realistic in 2026, identity verification has become a critical component of secure remote hiring.

5. AI Answer Suggestion Systems and Automated Submissions

For take-home assignments, candidates may pull solutions from public repositories, use AI to rewrite existing code, or auto-generate full projects tailored to the prompt.

Because modern AI rewrites produce unique variations, conventional plagiarism systems struggle to detect manipulation. The result is a polished submission that does not reflect the candidate’s true hands-on capability.

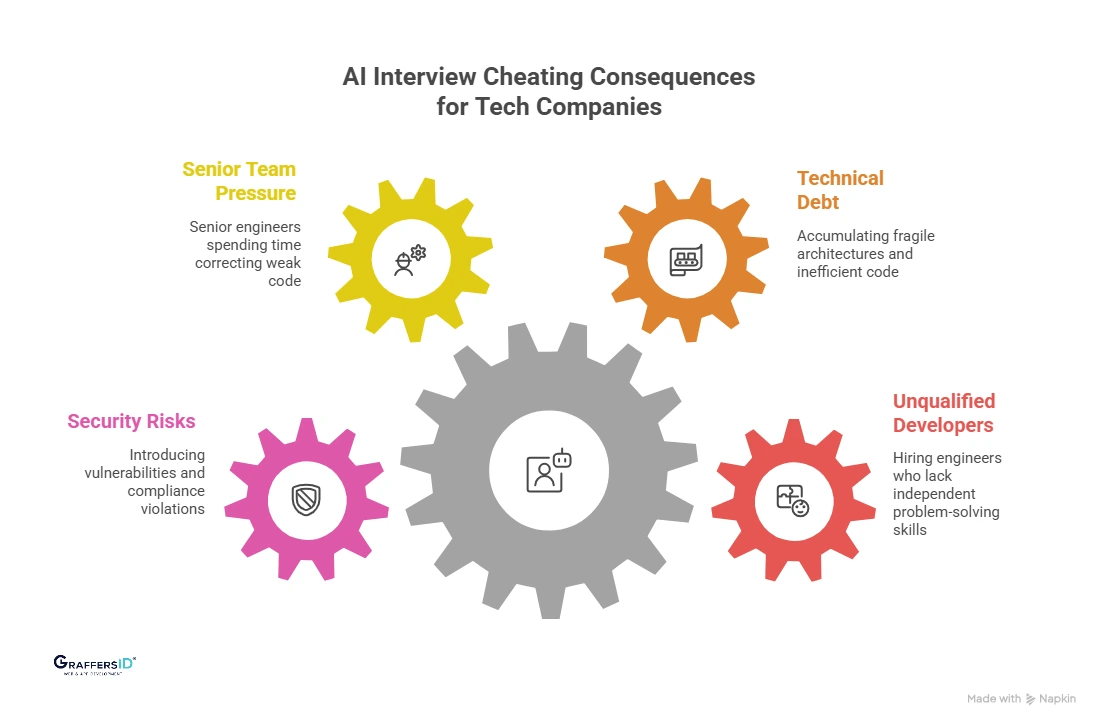

How AI Interview Cheating Impacts Tech Companies in 2026

Below are the most common risks tech leaders face due to AI-assisted interview fraud in 2026:

1. Hiring Unqualified Developers

When candidates rely heavily on AI during interviews, companies may unknowingly hire engineers who lack independent problem-solving ability. These developers often struggle with debugging, handling production incidents, or making architectural decisions without AI guidance.

This becomes especially risky in high-stakes environments like cybersecurity, infrastructure engineering, AI product development, and financial systems, where real-time judgment is critical.

2. Rising Technical Debt & Poor Code Quality

Unqualified hires tend to produce fragile architectures, inefficient APIs, poorly structured databases, and code that is difficult to scale or maintain. While AI-generated solutions may look polished initially, they often lack deeper optimization and long-term design thinking.

Over time, this leads to mounting technical debt, higher maintenance costs, and slower product velocity, and the total cost can end up several times the original hiring investment.

3. Increased Pressure on Senior Engineering Teams

When a compromised hire joins the team, senior developers are forced to spend additional time reviewing, correcting, and rewriting weak code. This drains high-value engineering bandwidth and delays critical roadmap milestones.

Instead of building new features or innovating, experienced engineers end up compensating for skill gaps, reducing overall team productivity.

4. Security, Compliance, and Data Risk Exposure

Developers who lack strong fundamentals may unintentionally introduce security vulnerabilities, misconfigure authentication systems, expose API keys, or mishandle sensitive data. In regulated industries such as fintech and healthcare, or in SaaS products that handle customer data, this can trigger compliance violations and financial penalties.

In 2026, when cyber threats are increasingly AI-driven, hiring underqualified technical talent significantly amplifies organizational risk.

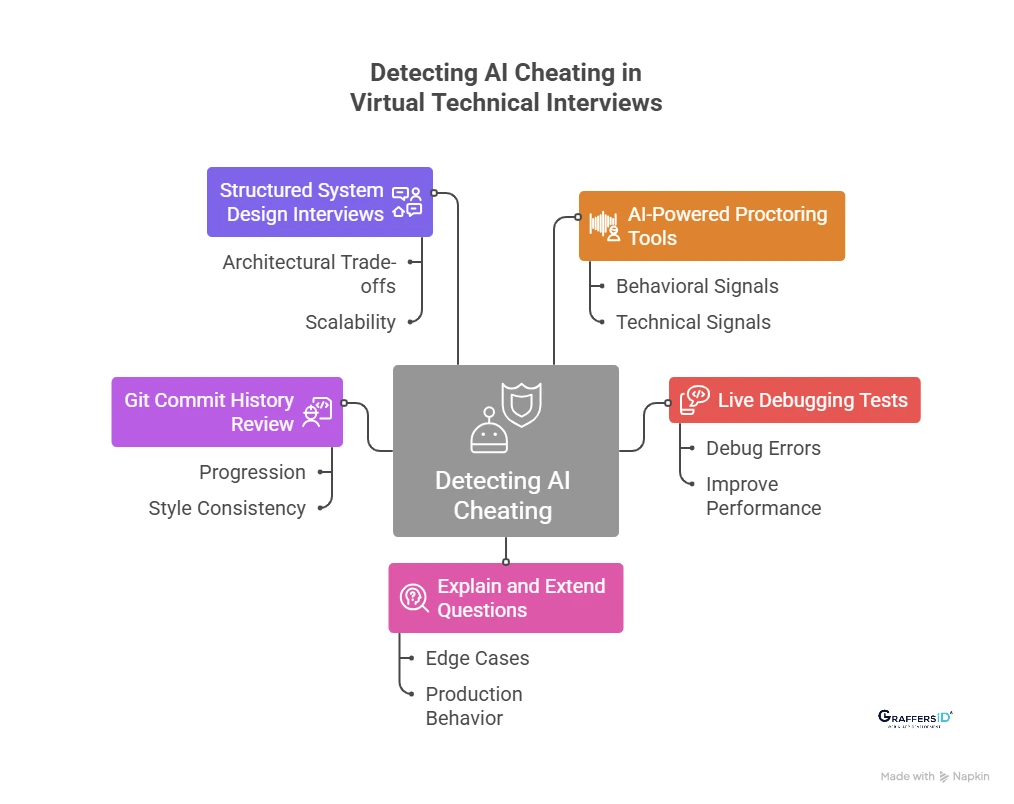

How to Detect AI Cheating in Virtual Technical Interviews? 2026 Guide

As AI tools become more advanced, companies need structured, evidence-based methods to detect AI-assisted cheating without slowing down hiring. Below are practical, 2026-ready strategies used by leading engineering teams:

Step 1: Use AI-Powered Interview Proctoring Tools

Modern proctoring platforms analyze behavioral and technical signals during live interviews. They can:

- Detect AI-generated code patterns and similarity anomalies

- Monitor tab switching, screen activity, and browser behavior

- Analyze typing speed, rhythm, and keystroke consistency

- Flag unusual eye movement and off-screen attention

- Identify voice modulation irregularities during responses
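As a rough illustration of the typing-rhythm signal in the list above, the sketch below flags moments where a large block of text appears in the editor faster than anyone could type it. The `EditEvent` format, the `find_paste_like_bursts` name, and both thresholds are assumptions made for this example; it does not describe how any commercial proctoring tool works internally.

```python
from dataclasses import dataclass


@dataclass
class EditEvent:
    """One editor change: when it happened and how many characters it added."""
    timestamp: float   # seconds since the session started
    chars_added: int


def find_paste_like_bursts(events, max_chars_per_sec=15.0, min_chars=40):
    """Return events whose size and speed are implausible for manual typing.

    Both thresholds are illustrative assumptions; tune them on real sessions.
    """
    flagged = []
    previous_time = 0.0
    for event in events:
        # Time since the previous event, floored to avoid division by zero.
        elapsed = max(event.timestamp - previous_time, 0.001)
        rate = event.chars_added / elapsed
        if event.chars_added >= min_chars and rate > max_chars_per_sec:
            flagged.append(event)
        previous_time = event.timestamp
    return flagged


session = [
    EditEvent(2.0, 6),      # normal typing
    EditEvent(4.5, 9),
    EditEvent(30.0, 12),    # long pause, then a little typing
    EditEvent(30.4, 420),   # 420 characters in 0.4 s: likely pasted
]
for event in find_paste_like_bursts(session):
    print(f"review at t={event.timestamp}s: +{event.chars_added} chars")
```

A flag like this is a prompt for a follow-up question rather than proof of cheating, since pasting from one's own scratch file produces the same pattern.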

Popular Interview Monitoring Tools in 2026:

- HackerRank Proctoring

- CoderPad Live Interviews

- Mettl Secure Browser

- Copyleaks AI Detector

- Talview Behavioral Insights

These tools should support, not replace, human evaluation.

Step 2: Run Live Debugging and Code Modification Tests

Instead of asking candidates to write fresh code from scratch, give them broken or inefficient code and evaluate how they think. Ask them to:

- Debug errors in real time

- Improve performance bottlenecks

- Explain why the code fails

- Refactor logic for clarity and scalability

AI can generate solutions, but a candidate who is relaying its output tends to struggle when asked to reason spontaneously about unfamiliar code under live pressure.
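A debugging prompt can be very small. The sketch below is a hypothetical exercise, not taken from any real assessment: `moving_average_buggy` contains an off-by-one error that silently drops the last window, and `moving_average` is a reference fix the interviewer would keep back.

```python
def moving_average_buggy(values, window):
    """Version handed to the candidate. Bug: the range stops one window early."""
    return [
        sum(values[i:i + window]) / window
        for i in range(len(values) - window)      # should be len(values) - window + 1
    ]


def moving_average(values, window):
    """Reference fix: includes the final window and rejects invalid sizes."""
    if window <= 0 or window > len(values):
        raise ValueError("window must be between 1 and len(values)")
    return [
        sum(values[i:i + window]) / window
        for i in range(len(values) - window + 1)
    ]


data = [2, 4, 6, 8]
print(moving_average_buggy(data, 2))  # [3.0, 5.0], the last window is missing
print(moving_average(data, 2))        # [3.0, 5.0, 7.0]
```

The bug raises no exception, so the useful signal is whether the candidate notices the short output, explains why the loop bound is wrong, and thinks to ask about invalid window sizes.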

Step 3: Apply the “Explain and Extend” Questioning Method

After a candidate solves a problem, test the depth of understanding by extending the scenario. For example:

- What happens if the input size increases 10x?

- How would this behave in production?

- What edge cases could break this logic?

- How would you redesign this for scale?

Superficial AI-generated answers often collapse under deeper follow-up questioning.
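To make the 10x question concrete, consider a hypothetical duplicate-check task. The first function below is the kind of answer that passes small test cases; the second is what a candidate who understands the scaling problem might arrive at. The task and both function names are illustrative assumptions.

```python
def has_duplicate_quadratic(items):
    """Compares every pair: about n*(n-1)/2 comparisons, so 10x input is ~100x work."""
    for i in range(len(items)):
        for j in range(i + 1, len(items)):
            if items[i] == items[j]:
                return True
    return False


def has_duplicate_linear(items):
    """Tracks seen values in a set: one pass, so 10x input is ~10x work.

    Trade-off to discuss: uses O(n) extra memory and needs hashable items.
    """
    seen = set()
    for item in items:
        if item in seen:
            return True
        seen.add(item)
    return False


sample = [3, 1, 4, 1, 5]
print(has_duplicate_quadratic(sample), has_duplicate_linear(sample))
```

Both versions return the same result, which is the point of the follow-up: the difference only shows up when the candidate is asked to explain growth, memory cost, and the constraints each approach places on the input.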


Step 4: Review Git Commit History, Not Just Repositories

A polished GitHub repository is no longer enough to validate expertise. Look for:

- Natural progression in commit history

- Consistent coding style over time

- Meaningful issue discussions and pull requests

- Realistic contribution timelines

Authentic developer growth leaves behavioral traces that AI-generated projects often lack.
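Some of these traces can be summarized before a human looks at the repository. The sketch below assumes commit data has already been exported into `(unix_timestamp, lines_added)` pairs, for example by parsing the output of `git log --pretty=format:%at --shortstat` (the parser is not shown). The `summarize_history` function and its output fields are hypothetical, and the numbers only suggest where to look.

```python
def summarize_history(commits):
    """Summarize a list of (unix_timestamp, lines_added) commit records."""
    if not commits:
        return {"commits": 0, "active_days": 0, "largest_commit_share": 0.0}
    seconds_per_day = 86_400
    # Bucket commits into UTC days to count how many distinct days saw work.
    active_days = {timestamp // seconds_per_day for timestamp, _ in commits}
    total_lines = sum(lines for _, lines in commits)
    largest = max(lines for _, lines in commits)
    return {
        "commits": len(commits),
        "active_days": len(active_days),
        # Share of all added lines that arrived in the single biggest commit.
        "largest_commit_share": largest / total_lines if total_lines else 0.0,
    }


# A repository where nearly everything landed in one commit on one day.
dumped = [(1_700_000_000, 4_800), (1_700_000_600, 150), (1_700_001_200, 50)]
# A repository built up over several days in smaller steps.
gradual = [(1_700_000_000 + day * 86_400, 120 + 10 * day) for day in range(10)]

print(summarize_history(dumped))
print(summarize_history(gradual))
```

A high `largest_commit_share` with a single active day is not conclusive, since squashed histories and imported projects look the same, so it works best as a cue for asking the candidate to walk through how the project evolved.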

Step 5: Conduct Structured System Design Interviews

System design interviews remain one of the strongest defenses against AI-assisted cheating. Focus on:

- Architectural trade-offs

- Business constraints and scalability

- Failure handling and resilience planning

- Real-time design changes based on new requirements

AI tools can suggest frameworks, but candidates who lean on them tend to struggle with contextual decision-making and with reasoning as the requirements change mid-discussion.

Top Tools to Detect AI-Based Interview Cheating in 2026

| Tool | Problem Solved | How It Works |
|---|---|---|
| Copyleaks AI Detector | AI-generated code/answers | Machine learning identifies AI outputs |
| HackerRank Proctoring | Screen-sharing/tab-switching | Webcam monitoring + plagiarism checks |
| Mettl Secure Exam Browser | External access during tests | Locks screen, disables shortcuts, tracks behavior |
| HirePro Live Interview | Deepfake or impersonation | Face & voice verification + live proctoring |
| PlagScan / MOSS | Plagiarized or AI-submitted code | Compares submissions with online repositories |
| Xobin | AI/code-sharing during tests | AI flagging + webcam monitoring |
| Talview Behavioral Insights | Unnatural speech/typing | Analyzes tone, rhythm, and patterns |

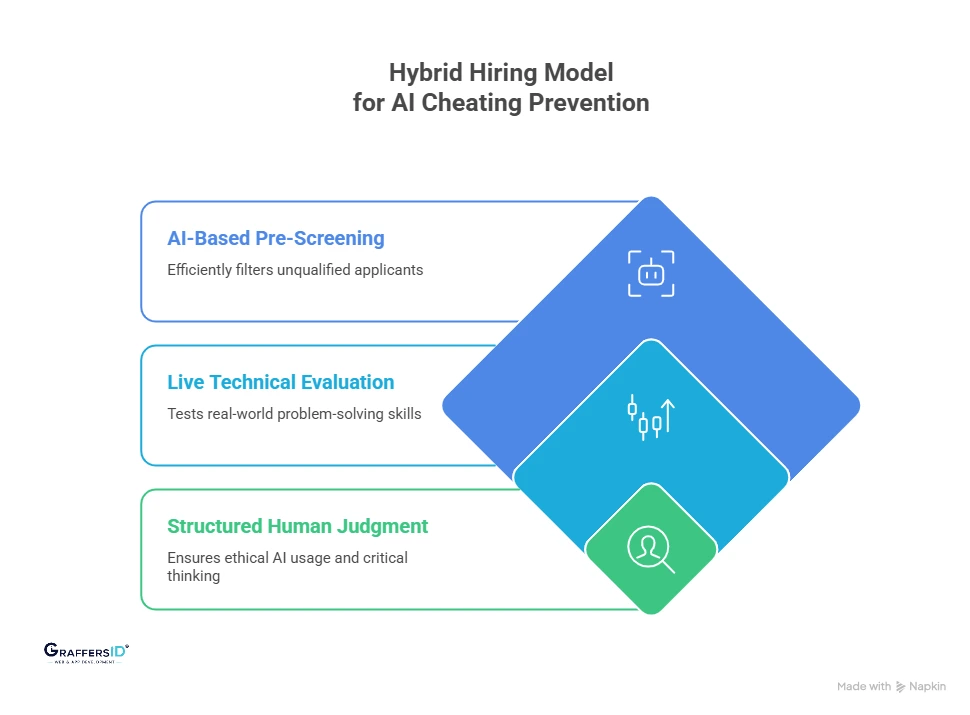

How to Prevent AI Cheating in Technical Interviews in 2026? (Hybrid Hiring Model for Secure Tech Recruitment)

In 2026, the smartest way to prevent AI-assisted interview cheating is not to ban AI tools but to redesign the hiring process. Below is a structured, multi-layered evaluation model that CTOs and hiring leaders can implement:

Layer 1: AI-Based Pre-Screening for Initial Filtering

Use automation to eliminate unqualified applicants efficiently before investing human interview time.

- Resume parsing & skill matching: Identify relevant experience, tech stack alignment, and role fit using AI screening tools.

- Foundational coding assessments: Run time-bound tests that measure basic syntax, logic, and structured thinking.

- Objective skill validation: Use standardized technical filters to benchmark candidates consistently.

This layer improves hiring efficiency without relying solely on self-reported skills.

Layer 2: Live Technical Evaluation to Test Real Ability

Real-time interaction is the strongest defense against AI-driven interview manipulation.

- Pair programming sessions: Assess how candidates think, communicate, and adapt while solving problems collaboratively.

- Live debugging tasks: Evaluate reasoning ability by asking candidates to identify and fix broken code.

- System design discussions: Test architectural thinking, scalability decisions, and trade-off analysis under real-world constraints.

Live evaluation exposes whether a candidate understands the logic behind the output, not just the output itself.

Layer 3: Structured Human Judgment for Final Validation

Technical performance alone is not enough in 2026. Independent thinking and ethical AI usage matter equally.

- Communication clarity: Can the candidate explain decisions without scripted answers?

- Critical thinking ability: Do they evaluate edge cases and anticipate failure scenarios?

- Cultural and ethical alignment: Do they demonstrate responsible AI usage and accountability?

This layer ensures you are hiring engineers who can operate independently in production environments.
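One way to picture the three layers is as sequential gates rather than an averaged score. The sketch below is an assumption about how a team might record this, not a prescribed tool: the class names, layer labels, scores, and thresholds are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class LayerResult:
    name: str
    score: float       # 0.0 to 1.0, assigned by the tool or interviewer
    threshold: float   # minimum score needed to move on


@dataclass
class CandidateReview:
    candidate: str
    layers: list = field(default_factory=list)

    def decision(self):
        """Advance only if every layer, in order, meets its threshold."""
        for layer in self.layers:
            if layer.score < layer.threshold:
                return f"stop at {layer.name}"
        return "advance to offer review"


review = CandidateReview("candidate-001", [
    LayerResult("ai_pre_screening", 0.82, 0.60),
    LayerResult("live_technical_evaluation", 0.45, 0.65),
    LayerResult("structured_human_judgment", 0.90, 0.70),
])
print(review.decision())  # stop at live_technical_evaluation
```

Gating instead of averaging means a strong automated pre-screen cannot offset a weak live round, which is the failure mode the layered model is meant to close.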

Conclusion: How to Prevent AI Interview Cheating and Hire Reliable Developers in 2026

AI-powered virtual interviews are now standard in tech hiring. But as AI tools become more advanced, so do the risks of AI-assisted interview cheating. AI interview cheating does not just lead to a bad hire; it compounds into operational inefficiencies, security threats, and long-term financial loss.

The companies that win in 2026 will not be the ones that ban AI. They will be the ones that design hiring systems strong enough to test independent thinking in an AI-assisted world.

At GraffersID, every developer goes through multi-layered technical screening, live evaluation rounds, and real-world skill validation, ensuring you hire engineers who can think independently, solve complex problems, and deliver production-ready systems.

Hire pre-vetted developers from GraffersID and ensure your projects are powered by top-tier talent.