Large Language Models transformed how software understands human language. LLM agents are transforming how software thinks, decides, and takes action.

In 2026, businesses are moving beyond chatbots that simply respond to prompts. Today’s leaders are looking for AI systems that can plan tasks, make decisions, use tools and APIs, and execute workflows autonomously across products, teams, and entire organizations.

This is where LLM agents come in.

From autonomous customer support and AI-powered development workflows to enterprise-grade automation and decision support, LLM agents are quickly becoming the backbone of AI-first products and operations.

In this guide, you’ll learn what LLM agents are, how they work, their core architecture, and the real business use cases driving adoption in 2026, so you can understand how organizations are using agentic AI to scale faster, operate smarter, and stay ahead of the competition.

What is an LLM Agent?

An LLM agent is an advanced AI system built on top of a Large Language Model that can understand objectives, make decisions, and take actions to complete tasks autonomously.

Unlike standard AI models that only generate responses, LLM agents are designed to operate as goal-driven systems. They can interpret intent, plan the steps required to achieve an outcome, interact with tools and APIs, and adjust their behavior based on results.

In practical terms, an LLM agent can:

- Understand high-level goals, not just individual prompts

- Plan and sequence actions to achieve those goals

- Use tools, APIs, databases, and enterprise systems

- Maintain short-term and long-term memory for context

- Reflect on outcomes and refine future actions

- Execute complex, multi-step workflows without constant human input

This shift allows AI to move from conversation-based interactions to real operational capability, making LLM agents suitable for production-grade business workflows across engineering, operations, customer support, and analytics.

Business Benefits of LLM Agents in 2026

Organizations adopting LLM agents gain:

- Faster execution without scaling headcount

- Always-on intelligent systems

- Personalized customer experiences

- Reduced operational costs

- Improved decision velocity

LLM Agent vs. Traditional LLM

A traditional Large Language Model is designed to respond to prompts. It typically:

- Generates text based on a prompt

- Responds once per interaction

- Has no awareness of goals or task completion

An LLM agent, on the other hand, is designed to achieve outcomes, not just produce responses. An LLM agent:

- Understands objectives, not just prompts

- Breaks goals into steps

- Uses tools, APIs, and data sources

- Remembers past context

- Acts autonomously until the task is complete

In simple terms: A traditional LLM tells you what to do, while an LLM agent actually does it.

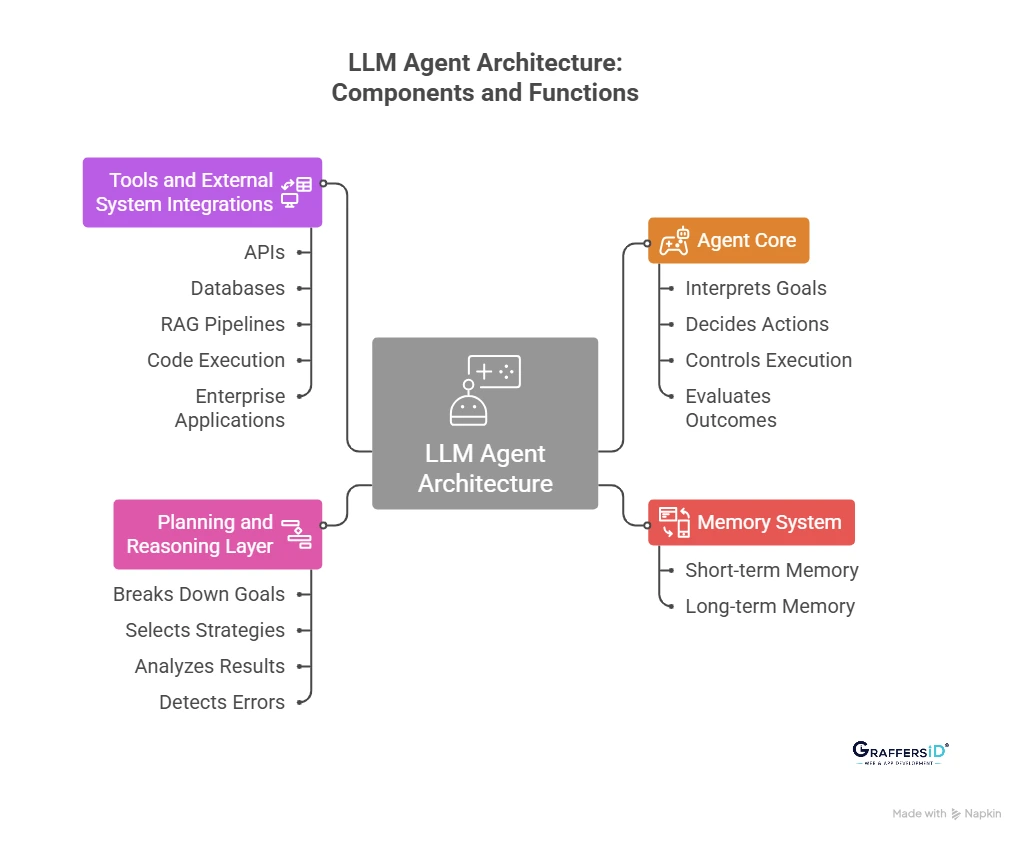

LLM Agent Architecture: How AI Agents Are Built in 2026

Understanding how LLM agent architecture works is essential for building scalable, reliable, and production-ready AI systems. Below is a clear breakdown of the core components of an LLM agent architecture.

1. Agent Core (Decision-Making Engine)

The agent core is the central control unit of an LLM agent. It is responsible for turning user goals into actionable steps and managing the agent’s behavior throughout a task. The agent core typically:

- Interprets high-level goals or instructions

- Decides what action to take next

- Controls the execution flow across multiple steps

- Evaluates outcomes before proceeding further

In practice, the agent core acts as an orchestrator, continuously deciding what the agent should do next based on the current context, available memory, and results from previous actions. This is what enables LLM agents to move beyond single-response outputs and operate autonomously.
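The orchestration role described above can be pictured as a small control loop. The following is a minimal sketch, not a reference implementation: `decide_next_action` and `execute` are hypothetical stand-ins for an LLM call and a tool call, and the action names are invented for the example.

```python
from dataclasses import dataclass, field


@dataclass
class AgentState:
    goal: str
    history: list = field(default_factory=list)  # (action, result) pairs so far
    done: bool = False


def decide_next_action(state: AgentState) -> str:
    """Hypothetical stand-in for the LLM call that picks the next step."""
    scripted = ["look_up_order", "draft_reply"]
    if len(state.history) < len(scripted):
        return scripted[len(state.history)]
    return "finish"


def execute(action: str) -> str:
    """Hypothetical executor; a real agent would call a tool here."""
    return f"result of {action}"


def run_agent(goal: str, max_steps: int = 5) -> AgentState:
    state = AgentState(goal=goal)
    for _ in range(max_steps):  # hard step budget so the loop always terminates
        action = decide_next_action(state)
        if action == "finish":
            state.done = True
            break
        result = execute(action)
        state.history.append((action, result))  # outcome feeds the next decision
    return state


final = run_agent("Answer a customer's order-status question")
print(final.done, [action for action, _ in final.history])
```

The step budget is a deliberate design choice in this sketch: an agent that decides its own next move needs an external limit so that a confused model cannot loop forever.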

2. Memory System in LLM Agents

Memory allows LLM agents to function beyond one-time interactions and enables context-aware and personalized behavior. Most production-grade LLM agents use two types of memory:

- Short-term memory: Stores the current task context, recent actions, and immediate conversation history.

- Long-term memory: Retains user preferences, historical interactions, previous decisions, and structured enterprise data.

By combining short-term and long-term memory, LLM agents can maintain continuity across sessions, draw on past actions, and deliver more relevant, personalized responses. These capabilities are critical for enterprise use cases in 2026.
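To make the two memory types concrete, here is a minimal sketch. It assumes a bounded window of recent turns for short-term memory and a plain dictionary standing in for a long-term store; the class and method names are illustrative, and a production system would typically back the long-term side with a database or vector store.

```python
from collections import deque


class AgentMemory:
    def __init__(self, short_term_size: int = 3):
        # Short-term: a bounded window of recent turns; old entries fall off.
        self.short_term = deque(maxlen=short_term_size)
        # Long-term: durable facts keyed by user. A dict stands in for a database.
        self.long_term = {}

    def remember_turn(self, text: str) -> None:
        self.short_term.append(text)

    def remember_fact(self, user_id: str, key: str, value: str) -> None:
        self.long_term.setdefault(user_id, {})[key] = value

    def build_context(self, user_id: str) -> str:
        """Combine stored facts and recent turns into one context string."""
        facts = self.long_term.get(user_id, {})
        fact_lines = [f"{k}: {v}" for k, v in sorted(facts.items())]
        return "\n".join(fact_lines + list(self.short_term))


memory = AgentMemory(short_term_size=2)
memory.remember_fact("user-42", "preferred_language", "German")
for turn in ["Hi", "Where is my order?", "It was placed on Monday"]:
    memory.remember_turn(turn)
print(memory.build_context("user-42"))
```

With a window of two, the greeting drops out of the context while the stored preference persists, which is the continuity the section describes.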

3. Planning and Reasoning Layer

The planning and reasoning layer enables LLM agents to handle complex, multi-step tasks rather than simple command execution. This layer allows agents to:

- Break high-level goals into smaller, manageable subtasks

- Select the most effective execution strategy

- Analyze intermediate results and adjust actions dynamically

- Detect errors and correct them before proceeding

Common reasoning patterns used in modern LLM agents include:

- Step-by-step task planning

- Reason–Act–Observe execution loops

- Self-reflection and output validation mechanisms

These reasoning capabilities are what make LLM agents reliable for real-world workflows such as automation, decision support, and software development.
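The self-reflection and validation pattern can be illustrated with a short sketch. Everything here is an assumption for demonstration purposes: `fake_model` is a stub whose first draft is deliberately malformed, and "valid" is defined narrowly as a JSON object containing a `total` field.

```python
import json


def fake_model(prompt: str, attempt: int) -> str:
    """Hypothetical model stub: the first draft is malformed, the second is valid."""
    return "total: 42" if attempt == 0 else '{"total": 42}'


def validate(output: str):
    """Return (ok, feedback). Here 'valid' means a JSON object with a 'total' key."""
    try:
        data = json.loads(output)
    except json.JSONDecodeError:
        return False, "Output was not valid JSON."
    if not isinstance(data, dict) or "total" not in data:
        return False, "JSON is missing the 'total' field."
    return True, ""


def generate_with_reflection(task: str, max_attempts: int = 3):
    prompt = task
    for attempt in range(max_attempts):
        output = fake_model(prompt, attempt)
        ok, feedback = validate(output)
        if ok:
            return output, attempt + 1
        # Reflect: feed the validator's complaint back into the next attempt.
        prompt = f"{task}\nPrevious attempt failed: {feedback}"
    raise RuntimeError("No valid output within the attempt budget")


output, attempts = generate_with_reflection("Sum the invoice and return JSON")
print(output, attempts)
```

The point of the sketch is the shape of the loop: errors are detected by a check outside the model and corrected before the agent proceeds.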

4. Tools and External System Integrations

LLM agents become truly powerful when they can interact with systems outside the language model itself. Modern LLM agents can securely use tools such as:

- APIs and microservices

- Databases, CRMs, and ERP systems

- Retrieval-Augmented Generation (RAG) pipelines

- Code execution and scripting environments

- Internal enterprise applications and dashboards

By integrating with external tools and data sources, LLM agents can retrieve real-time information, execute actions, and complete end-to-end workflows, not just generate text. This capability is what makes them suitable for production-grade AI applications in 2026.
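One common way to expose tools to an agent is a registry that maps names to functions, so the model only has to emit a structured request. The sketch below assumes a request shaped like `{"tool": ..., "args": {...}}`; that shape, the tool names, and the in-memory order data are all hypothetical.

```python
TOOLS = {}  # tool name -> callable


def tool(name: str):
    """Register a function under a name the agent can refer to."""
    def register(func):
        TOOLS[name] = func
        return func
    return register


@tool("get_order_status")
def get_order_status(order_id: str) -> str:
    # Hypothetical lookup; a real tool would query an API or database.
    orders = {"A100": "shipped", "A101": "processing"}
    return orders.get(order_id, "unknown")


@tool("add")
def add(a: float, b: float) -> float:
    return a + b


def call_tool(request: dict) -> dict:
    """Dispatch a structured request such as the model might emit."""
    name = request.get("tool")
    if name not in TOOLS:
        return {"ok": False, "error": f"unknown tool: {name}"}
    try:
        return {"ok": True, "result": TOOLS[name](**request.get("args", {}))}
    except TypeError as exc:  # wrong or missing arguments
        return {"ok": False, "error": str(exc)}


print(call_tool({"tool": "get_order_status", "args": {"order_id": "A100"}}))
print(call_tool({"tool": "delete_everything"}))
```

Returning errors as data rather than raising lets the agent observe the failure and choose a different action on its next step.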

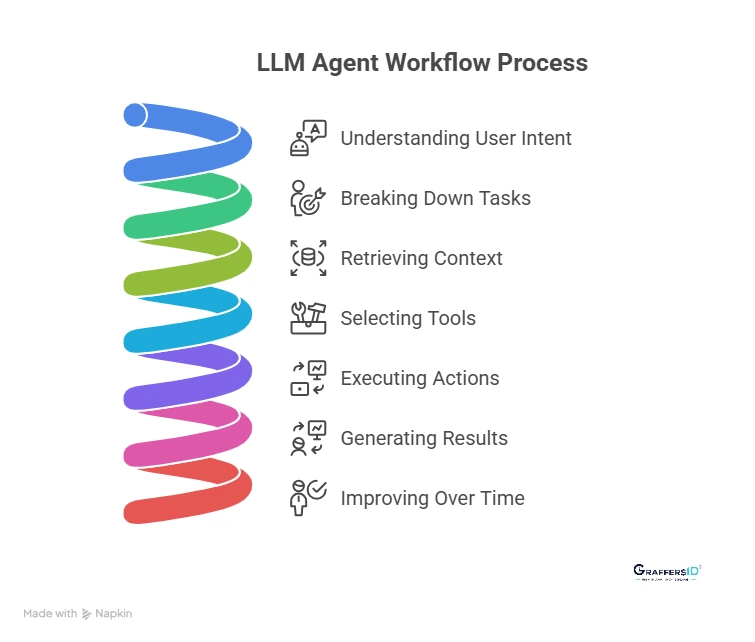

How LLM Agents Work in 2026: Step-by-Step Process

Step 1: Understanding User Intent and Goals

LLM agents first analyze natural language input to clearly understand what the user wants to achieve. They identify the primary objective, any constraints or limitations, and the criteria that define a successful outcome. This step ensures the agent focuses on goals, not just keywords.

Key elements identified:

- User intent and desired result

- Constraints such as time, data, or permissions

- Success metrics for task completion
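The three elements above map naturally onto a small data structure. This is a sketch under stated assumptions: the field names and the example constraint keys are illustrative, and in practice the values would be extracted from the user's message by the model.

```python
from dataclasses import dataclass, field


@dataclass
class Goal:
    intent: str                                        # what the user wants
    constraints: dict = field(default_factory=dict)    # time, data, permissions
    success_criteria: list = field(default_factory=list)

    def missing_parts(self) -> list:
        """List what still needs clarifying before planning can start."""
        missing = []
        if not self.intent.strip():
            missing.append("intent")
        if not self.success_criteria:
            missing.append("success_criteria")
        return missing


goal = Goal(
    intent="Refund order A100",
    constraints={"deadline_hours": 24, "requires_permission": "refunds:write"},
)
print(goal.missing_parts())  # ['success_criteria']
```

A check like `missing_parts` gives the agent a reason to ask a clarifying question instead of planning against an underspecified goal.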

Step 2: Breaking Down Complex Tasks into Actions

Once the goal is clear, the agent breaks it into smaller, manageable steps that can be executed sequentially or in parallel. This task decomposition allows the agent to handle complex workflows instead of single-turn responses.

Common task components include:

- Data collection or retrieval

- Logical decision-making steps

- Action execution and validation
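Sequential versus parallel execution falls out of the dependencies between steps. The sketch below uses Python's standard `graphlib` module to group a hypothetical refund plan into batches. The step names are invented, and in a real agent the plan itself would come from the model.

```python
from graphlib import TopologicalSorter

# Hypothetical plan: each step maps to the set of steps it depends on.
plan = {
    "fetch_invoice": set(),
    "fetch_customer": set(),
    "check_refund_policy": {"fetch_invoice", "fetch_customer"},
    "issue_refund": {"check_refund_policy"},
    "notify_customer": {"issue_refund"},
}


def execution_batches(steps: dict) -> list:
    """Group steps into batches; steps within a batch can run in parallel."""
    sorter = TopologicalSorter(steps)
    sorter.prepare()  # raises graphlib.CycleError if the plan is circular
    batches = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())
        batches.append(ready)
        sorter.done(*ready)
    return batches


for number, batch in enumerate(execution_batches(plan), start=1):
    print(number, batch)
```

Here the two fetch steps land in the first batch because neither depends on the other, while the remaining steps run one after another.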

Step 3: Retrieving Context and Knowledge Using Memory and RAG

To deliver accurate and grounded outputs, the agent retrieves relevant context from its memory and external knowledge sources. Retrieval-Augmented Generation (RAG) enables access to enterprise data, documents, and knowledge bases in real time.

Sources used for context:

- Short-term and long-term memory

- Internal enterprise systems

- Knowledge bases and structured data
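The retrieve-then-generate idea can be shown in a few lines. Note the simplification: this sketch ranks documents by plain word overlap as a crude stand-in for the embedding-based search most RAG pipelines use, and the knowledge base contents are invented.

```python
import re


def tokenize(text: str) -> set:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: dict, top_k: int = 2) -> list:
    """Rank documents by words shared with the query (stand-in for vector search)."""
    query_terms = tokenize(query)
    scored = []
    for doc_id, text in documents.items():
        overlap = len(query_terms & tokenize(text))
        if overlap:
            scored.append((overlap, doc_id))
    scored.sort(key=lambda pair: (-pair[0], pair[1]))  # best score first, then id
    return [doc_id for _, doc_id in scored[:top_k]]


def build_prompt(query: str, documents: dict) -> str:
    """Prepend the retrieved passages so the model answers from them."""
    passages = [f"[{doc_id}] {documents[doc_id]}" for doc_id in retrieve(query, documents)]
    return "Context:\n" + "\n".join(passages) + f"\n\nQuestion: {query}"


# Hypothetical knowledge base.
kb = {
    "refund-policy": "Refunds are issued within 14 days of purchase.",
    "shipping": "Standard shipping takes 3 to 5 business days.",
    "returns": "Returns require the original receipt.",
}
print(build_prompt("How many days do refunds take?", kb))
```

Whatever the ranking method, the grounding step is the same: the retrieved passages travel with the question so the answer can be traced back to a source.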

Step 4: Selecting and Using Tools to Take Action

LLM agents don’t just suggest actions; they execute them. Based on the task, the agent selects the most appropriate tools and interacts with external systems to complete the workflow.

Examples of tool usage:

- Calling APIs and microservices

- Querying databases or CRMs

- Triggering automated business workflows

Step 5: Generating Results and Improving Over Time

After execution, the agent synthesizes results into a clear, user-ready output. It then evaluates the outcome, stores useful learnings in memory, and uses them to refine future decisions, so later runs can build on what worked before.

This step enables:

- Outcome evaluation and validation

- Memory updates for personalization

- Continuous performance improvement
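One simple way to picture improvement over time is an outcome log that the agent consults before choosing an approach. This is a sketch of that idea only: the class, the task types, and the strategy names are hypothetical, and no model retraining is involved.

```python
from collections import defaultdict


class OutcomeLog:
    """Tracks which strategy worked for which task type (hypothetical names)."""

    def __init__(self):
        # (task_type, strategy) -> [successes, attempts]
        self.stats = defaultdict(lambda: [0, 0])

    def record(self, task_type: str, strategy: str, success: bool) -> None:
        entry = self.stats[(task_type, strategy)]
        entry[0] += int(success)
        entry[1] += 1

    def best_strategy(self, task_type: str, default: str) -> str:
        """Pick the strategy with the highest observed success rate."""
        rates = {
            strategy: wins / tries
            for (kind, strategy), (wins, tries) in self.stats.items()
            if kind == task_type
        }
        return max(rates, key=rates.get) if rates else default


log = OutcomeLog()
log.record("refund", "ask_for_receipt_first", False)
log.record("refund", "check_order_first", True)
log.record("refund", "check_order_first", True)
print(log.best_strategy("refund", default="ask_for_receipt_first"))
```

The same log doubles as an evaluation record, since every stored entry is a validated outcome.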

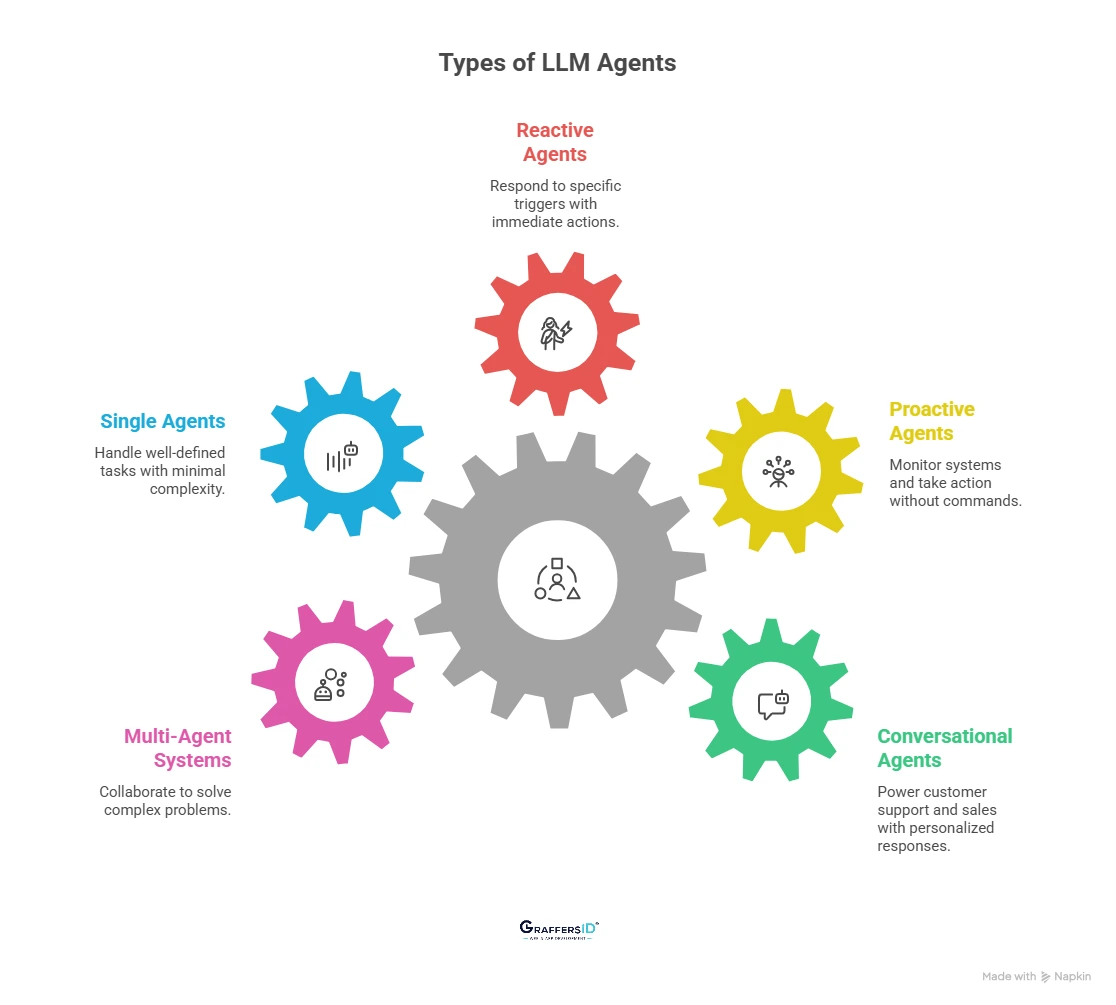

Common Types of LLM Agents Used in Real-World Applications in 2026

1. Conversational Agents

Conversational LLM agents power advanced customer support, sales, and internal helpdesk systems. Unlike traditional chatbots, they maintain context across conversations, access enterprise data, and deliver personalized, accurate responses in real time.

2. Proactive Agents

Proactive LLM agents monitor systems, data, or user behavior and take action without waiting for a command. In production environments, they are used for alerts, recommendations, anomaly detection, and workflow initiation, enabling faster and more intelligent decision-making.

3. Reactive Agents

Reactive LLM agents respond to specific triggers such as user actions, system events, or incoming requests. These agents are commonly used in support systems, compliance workflows, and real-time operations where immediate, context-aware responses are required.

4. Single Agents

Single-agent systems handle well-defined tasks with minimal complexity, such as answering internal queries or generating reports. They are easier to deploy and maintain, making them suitable for organizations starting with agent-based AI.

5. Multi-Agent Systems

Multi-agent LLM systems consist of multiple specialized agents working together to solve complex problems. Each agent handles a specific role, such as planning, execution, or validation, making this approach effective for enterprise-scale automation and decision support.
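The division of roles can be sketched as three cooperating functions. In a real system each role would be its own LLM-backed agent. Here they are hypothetical stubs so that the hand-offs between planning, execution, and validation are easy to follow.

```python
def planner(goal: str) -> list:
    """Hypothetical planner role: turn a goal into ordered steps."""
    return [f"gather data for: {goal}", f"write summary for: {goal}"]


def executor(step: str) -> str:
    """Hypothetical executor role: carry out one step."""
    return f"done({step})"


def validator(results: list) -> bool:
    """Hypothetical validator role: accept only if every step reported success."""
    return bool(results) and all(r.startswith("done(") for r in results)


def run_pipeline(goal: str) -> dict:
    steps = planner(goal)                         # planner hands steps to the executor
    results = [executor(step) for step in steps]  # executor hands results to the validator
    return {"steps": steps, "results": results, "approved": validator(results)}


report = run_pipeline("Q3 churn report")
print(report["approved"], len(report["steps"]))
```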

Enterprise Use Cases of LLM Agents in 2026

- AI-Powered Customer Support Automation: LLM agents handle customer queries end-to-end by resolving tickets autonomously, retrieving data from CRMs and knowledge bases, and escalating complex issues only when human intervention is required.

- Autonomous Business Process Automation: LLM agents automate internal workflows such as employee onboarding, document processing, approvals, and compliance checks, reducing manual effort and operational delays across teams.

- AI Agents for Software Development Workflows: LLM agents assist engineering teams by generating and refactoring code, detecting bugs, running tests, and supporting CI/CD pipelines to accelerate development cycles.

- Sales and Marketing Automation: LLM agents qualify leads, personalize outreach at scale, update CRM systems automatically, and generate performance reports to improve conversion and revenue efficiency.

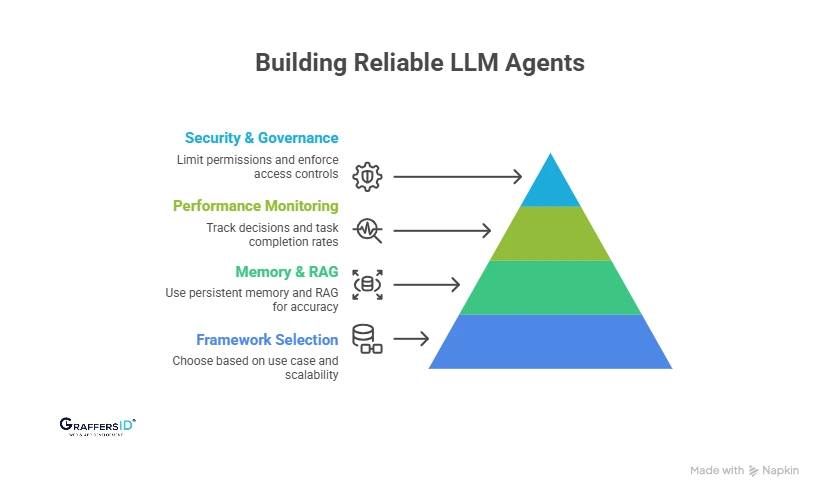

Best Practices to Build Reliable LLM Agents in 2026

1. Choosing the Right LLM Agent Framework

Selecting the right framework is critical for long-term success. Choose frameworks based on the complexity of your use case, required tool integrations, and how well they scale across teams, workloads, and enterprise systems in production environments.

2. Designing Memory and RAG for Accurate AI Agents

Effective LLM agents rely on well-structured memory and retrieval systems. Use persistent memory layers and Retrieval-Augmented Generation (RAG) to ground agent responses in enterprise data, reduce hallucinations, and maintain contextual accuracy over time.

3. Monitoring LLM Agent Performance and Reliability

Production-ready LLM agents must be observable. Track agent decisions, tool usage, and task completion rates to identify failures, improve accuracy, and ensure consistent performance as workflows grow more complex.
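As a starting point, the three signals named above can be captured with a small in-process tracker. This is a minimal sketch with illustrative names. A production setup would more likely ship these events to a logging or tracing backend.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class AgentMetrics:
    tool_calls: Counter = field(default_factory=Counter)
    tasks_total: int = 0
    tasks_completed: int = 0
    decisions: list = field(default_factory=list)  # ordered trace for debugging

    def log_decision(self, task_id: str, decision: str) -> None:
        self.decisions.append((task_id, decision))

    def log_tool_call(self, tool_name: str) -> None:
        self.tool_calls[tool_name] += 1

    def log_task(self, completed: bool) -> None:
        self.tasks_total += 1
        self.tasks_completed += int(completed)

    @property
    def completion_rate(self) -> float:
        return self.tasks_completed / self.tasks_total if self.tasks_total else 0.0


metrics = AgentMetrics()
metrics.log_decision("task-1", "use crm_lookup before replying")
metrics.log_tool_call("crm_lookup")
metrics.log_task(completed=True)
metrics.log_task(completed=False)
print(metrics.completion_rate, dict(metrics.tool_calls))
```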

4. Securing LLM Agents with Governance and Access Control

Security is essential when agents can act autonomously. Limit tool permissions, enforce role-based access controls, and maintain audit logs to ensure compliance, prevent misuse, and build trust in agent-driven systems.
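Role-based tool permissions and audit logging can be combined in a single gate that every tool call passes through. The roles, tool names, and log format below are assumptions made for this sketch.

```python
from datetime import datetime, timezone

# Hypothetical role-to-tool allowlist.
ROLE_PERMISSIONS = {
    "support_agent": {"read_order", "draft_reply"},
    "billing_agent": {"read_order", "issue_refund"},
}

audit_log = []


def authorize_and_log(role: str, tool_name: str) -> bool:
    """Allow the call only if the role's allowlist includes the tool; log either way."""
    allowed = tool_name in ROLE_PERMISSIONS.get(role, set())
    audit_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "role": role,
        "tool": tool_name,
        "allowed": allowed,
    })
    return allowed


print(authorize_and_log("support_agent", "draft_reply"))
print(authorize_and_log("support_agent", "issue_refund"))
```

Denied attempts are logged as well as allowed ones, since a pattern of refusals is often the first sign that an agent is being misused or is misreading its task.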

Conclusion: Why LLM Agents Power AI-First Businesses in 2026

LLM agents mark a fundamental shift in artificial intelligence, from systems that only respond to prompts to systems that plan, decide, and take action. They enable software to operate autonomously, interact with tools and data, and complete real business tasks end to end.

In 2026, competitive advantage doesn’t come from simply adopting AI. It comes from deploying agentic AI systems that automate workflows, assist teams, and scale intelligently across products and operations. Organizations investing in LLM agents today are laying the groundwork for:

- Autonomous and self-operating software systems

- AI-native products built for speed and adaptability

- Scalable digital operations with lower manual effort

As enterprises move toward AI-first architectures, LLM agents are becoming a core layer of modern software strategy rather than an add-on.

Ready to Build LLM Agents for Your Business?

At GraffersID, we help startups and enterprises hire dedicated AI developers to design, build, and scale AI-powered applications, LLM agent systems, and custom web and mobile platforms.

Contact GraffersID today to start building intelligent, agent-driven AI solutions that give your business a lasting edge.