Quick Takeaways

- Gartner predicts 40% of enterprise applications will include task-specific AI agents by the end of 2026, up from less than 5% in 2025. The shift from pilot to production is happening right now.

- The real CTO challenge is not deploying agentic AI tools. It is closing the governance gap that causes more than 40% of agent projects to fail before they reach production.

- Agentic AI is changing the skills your engineering team needs. Developers fluent in LLM orchestration and multi-agent frameworks are now a competitive advantage, not a nice-to-have.

- CTOs who treat agentic AI as a vendor selection decision rather than an architectural one will be dealing with compounding technical debt within the next 18 months.

Here is what the numbers actually say about agentic AI adoption in 2026: roughly 79% of enterprises have adopted AI agents in some form, but only around 11% are running them in production. That gap is not a technology problem. It is a leadership and governance problem. And if you are running an engineering team of any meaningful size, it is probably your problem too.

I have spent the last several months watching companies rush to deploy AI agents across their dev workflows, only to quietly shelve them six months later. The tools were not the issue. The missing piece was always a clear answer to: what exactly is this agent accountable for, and who owns it when it goes wrong?

This post is not about convincing you that agentic AI matters. You already know it does. It is about helping you make sharper decisions about how, where, and when to actually use it.

What Agentic AI Actually Is (and What It Is Not)

Beyond the Copilot Model

Most engineering teams have already worked with AI copilots. Tools like GitHub Copilot or similar code-completion assistants operate on a simple model: you prompt, the AI responds, you decide what to do next. The human stays in the loop for every decision.

Agentic AI works differently. Instead of waiting for your next instruction, an agentic system is given a goal and then plans, executes, and adapts across multiple steps to reach it. It can call tools, browse documentation, write and run tests, review its own output, and hand off to another specialized agent when needed. All without you managing each step.

The practical difference matters more than it sounds. A copilot helps one developer move faster. An agentic system can autonomously handle entire phases of a development workflow (testing, documentation, code review, incident triage), running them in parallel at a scale no human team can match.
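To make the contrast with a copilot concrete, here is a minimal sketch of a goal-driven agent loop. Everything in it is an illustrative assumption: `run_agent`, `toy_planner`, and the `TOOLS` table are hypothetical names, and the planner is a scripted stand-in for what would normally be an LLM call. The point is only the shape of the loop: plan a step, execute it with a tool, record the result, and repeat until the planner says the goal is met or a step budget runs out.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

Step = tuple[str, str]  # (tool name, argument)


@dataclass
class AgentRun:
    goal: str
    history: list[str] = field(default_factory=list)
    done: bool = False


def run_agent(goal: str,
              plan_next_step: Callable[[AgentRun], Optional[Step]],
              tools: dict[str, Callable[[str], str]],
              max_steps: int = 5) -> AgentRun:
    """Plan, execute, and record steps until the planner stops or the budget ends."""
    run = AgentRun(goal=goal)
    for _ in range(max_steps):
        step = plan_next_step(run)  # the planner sees the full history so far
        if step is None:            # planner signals that the goal is reached
            run.done = True
            break
        tool_name, arg = step
        result = tools[tool_name](arg)
        run.history.append(f"{tool_name}({arg}) -> {result}")
    return run


def toy_planner(run: AgentRun) -> Optional[Step]:
    # Hypothetical stand-in for an LLM: follows a fixed two-step script.
    script = [("write_tests", "checkout"), ("run_tests", "checkout")]
    return script[len(run.history)] if len(run.history) < len(script) else None


# Hypothetical tools; real ones would call a test runner, a repo API, and so on.
TOOLS = {
    "write_tests": lambda target: f"3 tests written for {target}",
    "run_tests": lambda target: f"all tests passed for {target}",
}

run = run_agent("cover checkout with tests", toy_planner, TOOLS)
```

The `max_steps` cap is a deliberate design choice in this sketch: an agent that is allowed to loop indefinitely is much harder to own and audit than one with an explicit budget.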

If you want a deeper breakdown of how agentic AI differs from traditional AI agents, this guide on agentic AI vs AI agents covers the distinction well.

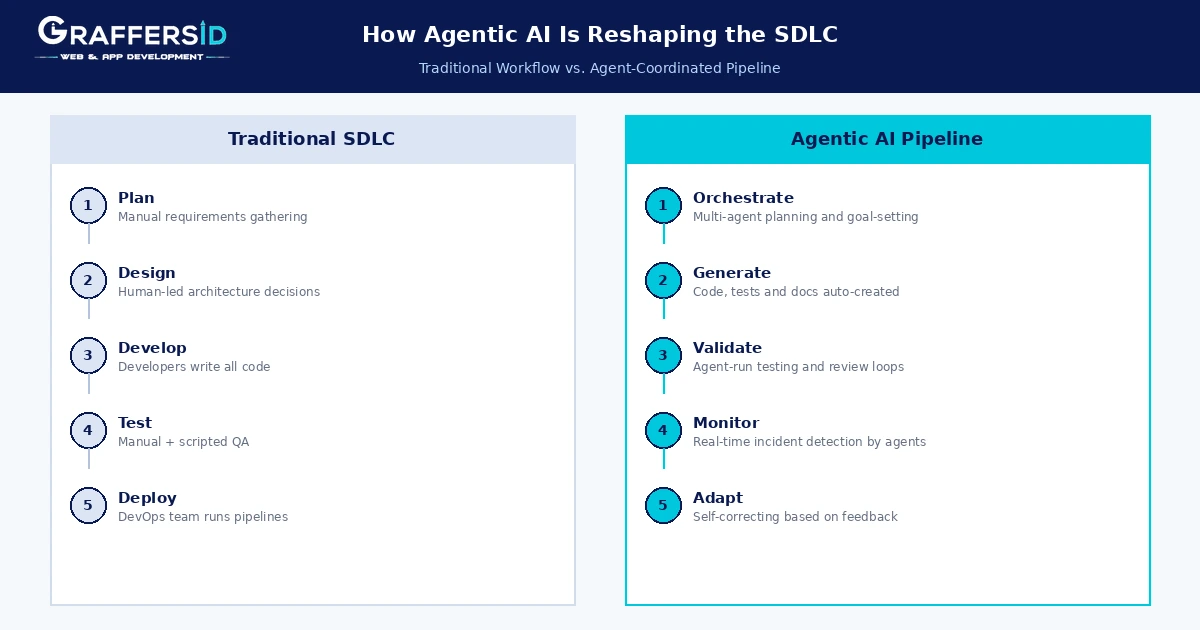

How Multi-Agent Orchestration Changes the SDLC

The shift that most engineering leaders underestimate is not the capability of a single agent. It is what happens when you deploy multiple specialized agents working together.

Think of it like this: one agent handles requirement parsing, another generates code stubs, a third runs test suites and flags failures, and a fourth monitors the deployed service for anomalies. Each has a specific function. An orchestration layer coordinates them and routes work based on output.
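The four-agent pipeline described above can be sketched in a few lines. This is a toy illustration, not a framework recommendation: each "agent" is a plain function standing in for a model-backed component, and the names (`parse_requirements`, `generate_stubs`, `run_tests`, `monitor`, `escalate`, `orchestrate`) and the rule that a requirement containing "todo" fails its tests are all assumptions made for the example.

```python
# Each agent takes the shared work item (a dict) and returns an updated copy.

def parse_requirements(item: dict) -> dict:
    reqs = [part.strip() for part in item["spec"].split(";") if part.strip()]
    return {**item, "requirements": reqs}


def generate_stubs(item: dict) -> dict:
    stubs = [f"def {r.replace(' ', '_')}(): ..." for r in item["requirements"]]
    return {**item, "stubs": stubs}


def run_tests(item: dict) -> dict:
    # Toy rule (assumption): anything still marked "todo" counts as a failure.
    failures = [r for r in item["requirements"] if "todo" in r]
    return {**item, "failures": failures}


def monitor(item: dict) -> dict:
    return {**item, "status": "monitoring"}


def escalate(item: dict) -> dict:
    return {**item, "status": "needs_human_review"}


def orchestrate(spec: str) -> dict:
    """Run the fixed stages, then route the work item based on test output."""
    item = {"spec": spec}
    for agent in (parse_requirements, generate_stubs, run_tests):
        item = agent(item)
    next_agent = escalate if item["failures"] else monitor
    return next_agent(item)


clean = orchestrate("create invoice; send receipt")
flagged = orchestrate("create invoice; todo refunds")
```

In this sketch the orchestration layer is the only place that knows the routing rule, which keeps each agent narrowly scoped and easy to replace.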

According to Anthropic’s 2026 Agentic Coding Trends Report, tasks that once required weeks of cross-team coordination can now become focused working sessions. The SDLC is not disappearing. It is being restructured around agent collaboration rather than human handoffs.


Where Agentic AI Is Actually Moving the Needle

Coding Workflows and Real Productivity Numbers

The software development use case is where agentic AI is delivering the clearest, most measurable value right now. GitHub’s 2026 projections suggest that close to 89% of professional developers will be using AI coding assistants, with a significant majority saying they are essential to daily workflow, not optional.

Here is what that looks like in practice across different engineering functions:

| Engineering Function | Agentic AI Application | Measured Impact |
|---|---|---|
| Code generation | Agent drafts features from spec | 30% faster delivery cycles |
| Test coverage | Agent writes and runs test suites | Fewer production bugs |
| DevOps and CI/CD | Agent monitors and optimizes pipelines | Up to 47% faster deployments |
| Incident response | Agent detects and initiates resolution | Reduced mean time to recovery |
| Code review | Agent flags issues before human review | Higher code quality from junior developers |

The Anthropic Agentic Coding Trends Report also highlights something worth noting for workforce planning: businesses can now surge engineers on demand onto tasks requiring deep codebase knowledge, then shift resources dynamically. Traditional staffing assumptions about team size and sprint capacity are starting to break down.

Beyond Code: DevOps and Incident Response

Agentic AI is also making real inroads in infrastructure management. Agents that scan deployment logs, detect anomaly patterns, and initiate rollback or alerting procedures without human intervention are already in production at a growing number of enterprise software development teams.

This is not experimental. Financial services and technology companies are leading adoption, with production deployment rates in tech already running well above the enterprise average.
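As a rough illustration of the detect-then-act pattern, the sketch below compares the error rate in current deployment logs against a baseline and picks an action. The function names, the `" ERROR "` log marker, and the 2x and 5x thresholds are assumptions chosen for the example, not values from any report; a production agent would use richer signals than a single ratio.

```python
def error_rate(log_lines: list[str]) -> float:
    """Fraction of lines that carry the (assumed) ' ERROR ' level marker."""
    if not log_lines:
        return 0.0
    errors = sum(1 for line in log_lines if " ERROR " in line)
    return errors / len(log_lines)


def decide_action(baseline_lines: list[str],
                  current_lines: list[str],
                  alert_ratio: float = 2.0,
                  rollback_ratio: float = 5.0) -> str:
    """Return 'rollback', 'alert', or 'none' from the error-rate ratio."""
    # Floor the baseline so a perfectly clean baseline does not divide by zero.
    baseline = max(error_rate(baseline_lines), 0.01)
    ratio = error_rate(current_lines) / baseline
    if ratio >= rollback_ratio:
        return "rollback"
    if ratio >= alert_ratio:
        return "alert"
    return "none"


# Hypothetical log samples: 2% errors before the deploy, 5% and 20% after.
baseline = ["12:00 INFO ok"] * 98 + ["12:00 ERROR timeout"] * 2
degraded = ["12:05 INFO ok"] * 95 + ["12:05 ERROR timeout"] * 5
broken = ["12:10 INFO ok"] * 80 + ["12:10 ERROR timeout"] * 20
```

Separating the "alert" and "rollback" thresholds is one way to keep a human informed on moderate drift while reserving autonomous action for clear regressions.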

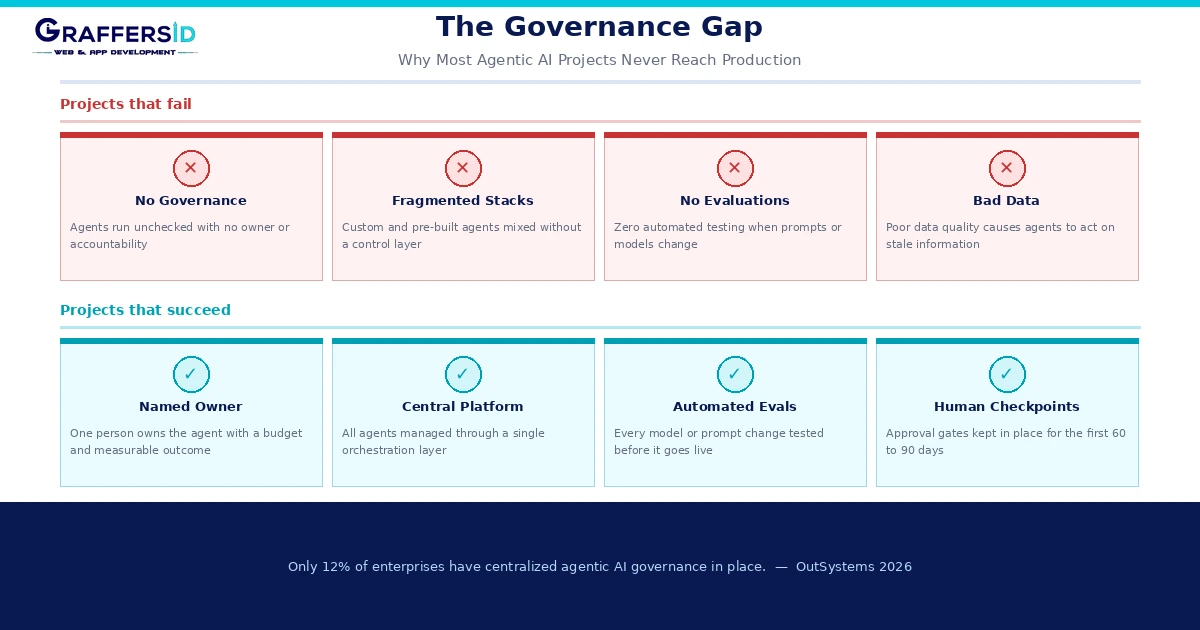

Why Are So Many Agentic AI Projects Failing?

This is the question most vendor conversations will not answer honestly. So here is what the data actually shows.

Gartner’s analysis projects that more than 40% of agent projects will fail by 2027. OutSystems surveyed 1,900 global IT leaders for its 2026 State of AI Development report and found that while 96% of organizations are using AI agents in some capacity, 94% report concern that AI sprawl is increasing complexity, technical debt, and security risk. Only 12% have implemented centralized governance.

That is not a surprising statistic. It is a predictable outcome when organizations treat agent deployment as a procurement exercise rather than an engineering discipline.

The Governance Gap

The organizations that are successfully running agents in production share a few consistent patterns. They have a named agent owner with budget authority and a measurable outcome. They run automated evaluations on every prompt or model change before deployment. They scope agents to a single workflow with clear success criteria, not open-ended assistant behavior. And for the first 60 to 90 days, they keep human-in-the-loop checkpoints in place.
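One way to make those four patterns enforceable is to encode them as a check that runs before any agent change ships. The sketch below is a minimal, hypothetical version: `AgentDeployment`, `governance_gaps`, and the field names are invented for illustration, and the 90-day constant simply takes the upper end of the 60 to 90 day window mentioned above.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

HITL_DAYS = 90  # assumption: upper end of the 60 to 90 day checkpoint window


@dataclass
class AgentDeployment:
    name: str
    owner: Optional[str]      # named owner accountable for the outcome
    workflows: list[str]      # workflows the agent is allowed to act on
    evals_passed: bool        # did automated evaluations pass on this change?
    launched: date
    human_checkpoint: bool    # is a human-in-the-loop checkpoint active?


def governance_gaps(d: AgentDeployment, today: date) -> list[str]:
    """List which of the four governance patterns this deployment is missing."""
    gaps = []
    if not d.owner:
        gaps.append("no named owner")
    if len(d.workflows) != 1:
        gaps.append("not scoped to a single workflow")
    if not d.evals_passed:
        gaps.append("automated evaluations not passing")
    if (today - d.launched).days < HITL_DAYS and not d.human_checkpoint:
        gaps.append("human-in-the-loop checkpoint missing in early period")
    return gaps


# Hypothetical deployments for illustration.
risky = AgentDeployment("pr-reviewer", None, ["code_review", "triage"],
                        True, date(2026, 1, 1), False)
sound = AgentDeployment("test-writer", "platform-lead", ["test_generation"],
                        True, date(2026, 1, 1), True)
```

A check like this could sit in a CI pipeline so that a deployment with any open gap is blocked rather than quietly shipped.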

The organizations that are failing are mostly doing the opposite: deploying agents across fragmented environments, mixing custom-built and pre-built systems without standardization, and measuring success with vague metrics like “developer productivity improvement.”

The Data Infrastructure Trap

Here is what most guides skip: agentic AI is only as reliable as the data it operates on. Around 52% of businesses cite data quality and availability as the biggest barrier to AI adoption, and that problem gets worse, not better, when you add autonomous agents to the equation.

An agent that writes code against a stale API schema, or triages incidents using outdated runbooks, does not fail loudly. It fails silently and confidently. Getting your data infrastructure right before scaling agents is not a prerequisite for pilots. It is a prerequisite for production.
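A simple guard can turn that silent failure into a loud one. The sketch below assumes each data source the agent depends on records when it was last refreshed; the names `stale_sources`, `require_fresh_context`, and `StaleContextError`, along with the 30-day limit, are hypothetical and chosen only for illustration.

```python
from datetime import datetime, timedelta


class StaleContextError(RuntimeError):
    """Raised when an agent is about to act on out-of-date inputs."""


def stale_sources(sources: dict[str, datetime],
                  now: datetime,
                  max_age: timedelta) -> list[str]:
    """Return the names of sources last refreshed more than max_age ago."""
    return sorted(name for name, refreshed in sources.items()
                  if now - refreshed > max_age)


def require_fresh_context(sources: dict[str, datetime],
                          now: datetime,
                          max_age: timedelta) -> None:
    """Fail loudly instead of letting the agent proceed on stale data."""
    stale = stale_sources(sources, now, max_age)
    if stale:
        raise StaleContextError(f"stale inputs: {', '.join(stale)}")


# Hypothetical refresh timestamps for two inputs an agent relies on.
sources = {
    "api_schema": datetime(2026, 1, 2),
    "runbooks": datetime(2025, 6, 1),
}
now = datetime(2026, 1, 10)
stale = stale_sources(sources, now, timedelta(days=30))
```

Calling `require_fresh_context` before the agent starts work means an outdated runbook stops the run with a clear error rather than producing a confident but wrong triage.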


What Skills Does Your Engineering Team Actually Need?

New Roles and What to Look For

The skills gap around agentic AI is real, and it is moving faster than most hiring pipelines can keep up with. The roles that matter in 2026 are not entirely new disciplines. They are extensions of existing engineering roles, with a specific layer of agentic knowledge added.

Your existing senior engineers need to understand prompt engineering as a systems skill, not a chatbot trick. They need to know how to build and evaluate multi-agent workflows using frameworks like LangChain, LangGraph, CrewAI, or AutoGen. They need to understand how to instrument agent behavior with observability and evaluation tooling, because 94% of successful production deployments run automated evaluations on every change.

Beyond technical skills, you need engineers who can define what good agent behavior looks like and detect when it drifts. That combination, technical depth plus judgment about autonomy, is what separates engineers who can ship agentic systems from those who can only use them.

For teams that need to move quickly, hiring AI developers with hands-on experience building agentic workflows is often faster than upskilling an entire team from scratch, particularly when you are building your first production agent deployment.

IDC data suggests that around 40% of roles in Global 2000 companies will involve direct engagement with AI agents by 2026. That is not a future prediction. That is a hiring brief.

A Practical Decision Framework for CTOs in 2026

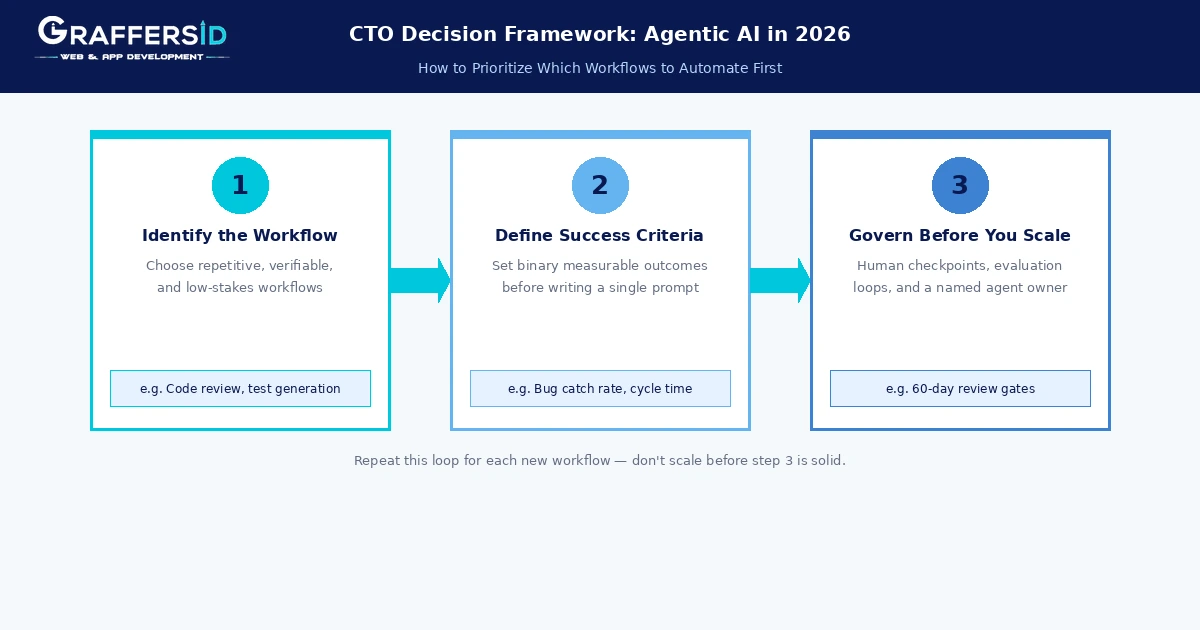

How to Prioritize Which Workflows to Agent-ify First

The single biggest mistake I see engineering leaders make is trying to build a general-purpose agent. Specific, scoped agents with binary success criteria outperform broad assistants almost every time.

Here is a simple three-step filter for deciding where to start:

- Identify workflows that are repetitive, verifiable, and low-risk to fail. Code review flagging, test generation, documentation drafting, and deployment monitoring are good starting points. The key question is: can you check the agent’s output without specialized knowledge?

- Define your success criteria before you write a single prompt. Not “improve developer productivity.” Something measurable: reduce PR review cycle time by 25%, catch 90% of failing tests before human review, cut incident triage time to under 10 minutes. If you cannot define it, you cannot govern it.

- Govern before you scale. Run with human-in-the-loop checkpoints for the first 60 to 90 days. Assign a named owner. Set up automated evaluations. Only once those are stable should you expand the agent’s scope or add new workflows.
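The example targets in the second step can be written down as data and checked automatically, which is one way to keep success criteria from drifting back into vague language. This is a sketch under stated assumptions: the `Criterion` class, the metric names, and the observed values are all hypothetical, and only the three thresholds (25%, 90%, under 10 minutes) come from the text above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    name: str
    target: float
    higher_is_better: bool

    def met(self, observed: float) -> bool:
        # "Reduce by 25%" and "catch 90%" are treated as at-least targets;
        # "under 10 minutes" is treated as a strict upper bound.
        if self.higher_is_better:
            return observed >= self.target
        return observed < self.target


CRITERIA = [
    Criterion("pr_review_cycle_reduction_pct", 25.0, higher_is_better=True),
    Criterion("failing_tests_caught_pct", 90.0, higher_is_better=True),
    Criterion("incident_triage_minutes", 10.0, higher_is_better=False),
]


def evaluate(observed: dict[str, float]) -> dict[str, bool]:
    """Map each criterion name to whether the observed value meets it."""
    return {c.name: c.met(observed[c.name]) for c in CRITERIA}


# Hypothetical measurements from a pilot period.
report = evaluate({
    "pr_review_cycle_reduction_pct": 28.0,
    "failing_tests_caught_pct": 86.5,
    "incident_triage_minutes": 8.0,
})
ready_to_scale = all(report.values())
```

In this made-up pilot the agent misses the test-catch target, so `ready_to_scale` is false and the scope stays where it is until that number improves.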

The agentic AI market is projected to reach close to $11 billion in 2026 and grow at a compound annual rate above 40% through the decade. The product development teams that are winning are not the ones that deployed the most agents. They are the ones that governed them well enough to keep them in production.

Where Does This Leave You?

Agentic AI in software development is no longer a question of whether. It is a question of how disciplined your approach is. The gap between organizations experimenting with agents and those running them reliably in production is not a technology gap. It is a governance, infrastructure, and talent gap.

If your team does not have engineers who understand multi-agent orchestration and evaluation, that is the first thing to fix. If your data infrastructure is not clean enough to trust an autonomous system’s decisions, that is the second. And if you do not have clear ownership and measurable success criteria for each agent you deploy, everything else is just expensive experimentation.

At GraffersID, we work with engineering teams that need to move fast on AI without the risk of building on a fragile foundation. Whether you need to hire remote developers who are already fluent in agentic frameworks, or you need to staff up quickly for a specific deployment, we can help you find engineers who are genuinely production-ready.

Ready to build agentic AI capabilities into your engineering team without starting from scratch? GraffersID connects you with pre-vetted AI developers experienced in LLM orchestration, multi-agent frameworks, and production-grade deployments.