QUICK TAKEAWAYS

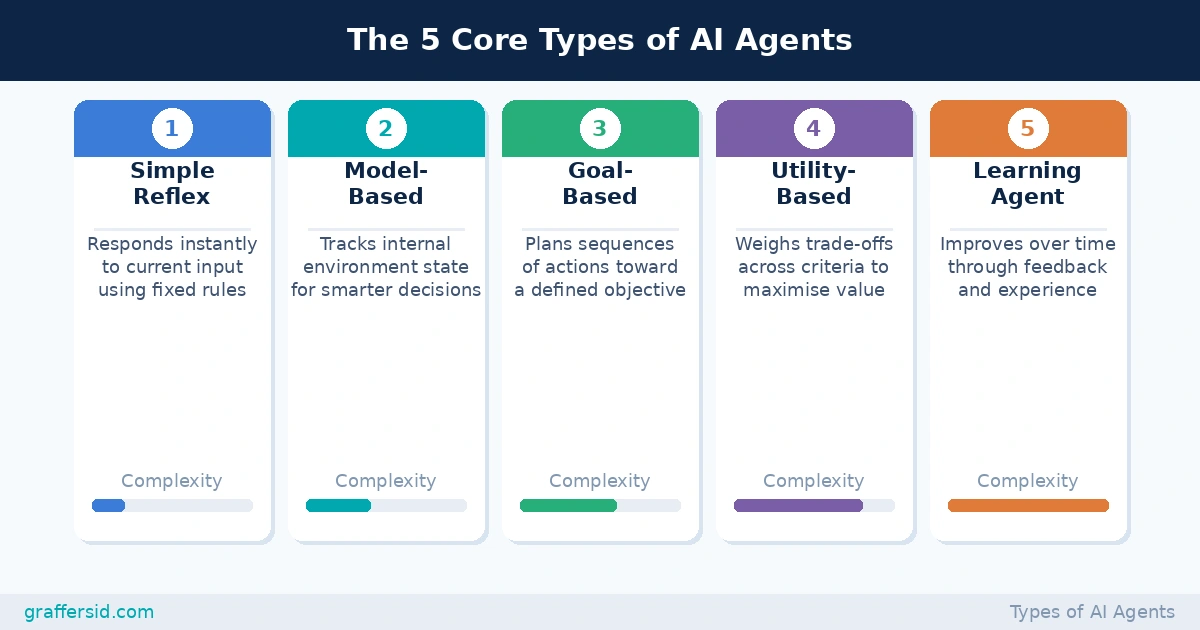

- There are five core types of AI agents — from simple reflex agents that follow rules, to learning agents that adapt over time — and each serves a fundamentally different business need.

- The global AI agents market reached $10.91 billion in 2026, with 51% of enterprises already running agents in production.

- Multi-agent systems are the fastest-growing architecture, with adoption projected to surge 67% by 2027.

- Choosing the wrong agent type for your use case is one of the most common reasons AI projects stall, exceed budget, or fail governance review.

Every CTO I talk to right now has the same problem. Their board has signed off on AI agents. Their engineering team has started experimenting. And somewhere between the proof of concept and the production roadmap, someone realises that “AI agent” isn’t one thing. It’s a category. And picking the wrong type is an expensive mistake.

The global AI agents market hit $10.91 billion in 2026, with Gartner forecasting that 40% of enterprise applications will embed task-specific agents by the end of this year, up from under 5% in 2025. The momentum is real. But so is the failure rate: over 40% of agentic AI projects are at risk of cancellation by 2027 if governance and ROI clarity aren’t established early.

Understanding the types of AI agents isn’t an academic exercise. It’s the first decision in a chain that determines your architecture, your engineering cost, and your eventual ROI.

What Makes an AI Agent Different from a Regular AI Model?

Before the taxonomy, a quick framing note. A standard large language model responds to a prompt. It takes input, produces output, and stops. An AI agent doesn’t stop there. It perceives its environment, makes a decision, and takes action — sometimes repeatedly, in a loop, until a goal is met.

To understand what an LLM agent actually does at an architectural level, it helps to think in terms of three core components: perception (what data does it receive), reasoning (how does it decide what to do next), and action (what does it execute in the real world). The type of agent you deploy determines how sophisticated each of those three layers is — and what trade-offs you’re making.
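To make the perceive, reason, act loop concrete, here is a minimal Python sketch. The function name `run_agent`, its parameters, and the toy counter environment are all illustrative assumptions, not part of any particular framework.

```python
from typing import Callable


def run_agent(perceive: Callable[[], int],
              decide: Callable[[int], str],
              act: Callable[[str], None],
              goal_met: Callable[[int], bool],
              max_steps: int = 10) -> int:
    """Loop perceive -> decide -> act until the goal is met or steps run out."""
    for step in range(max_steps):
        observation = perceive()        # perception: read the environment
        if goal_met(observation):
            return step                 # number of actions that were needed
        action = decide(observation)    # reasoning: choose what to do next
        act(action)                     # action: change the environment
    return max_steps


# Toy environment (hypothetical): a counter the agent must raise to 3.
state = {"count": 0}


def increment(action: str) -> None:
    if action == "increment":
        state["count"] += 1


steps_taken = run_agent(
    perceive=lambda: state["count"],
    decide=lambda obs: "increment",
    act=increment,
    goal_met=lambda obs: obs >= 3,
)
```

A plain model call would be a single `decide` invocation. The loop around it, plus the ability to act on the environment, is what makes it an agent.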

The 5 Core Types of AI Agents

The foundational taxonomy for AI agents comes from classical AI research, but it maps cleanly onto the systems being built and deployed today.

A categorisation of the five main agent types by function and complexity, from simple reflex to learning agents.

Simple Reflex Agents

Simple Reflex Agents operate entirely on condition-action rules. They look at the current input, match it against a predefined rule set, and execute the associated response. There’s no memory, no history, no planning. A rule-based spam filter, a temperature alert, a fraud flag based on a single transaction threshold: these are all simple reflex agents in practice. They’re fast, predictable, cheap to build, and brittle in any environment that changes.
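A condition-action rule set can be sketched in a few lines of Python. The thresholds, country codes, and action names below are made-up examples, not recommendations.

```python
# Each rule is (condition, action). All values here are hypothetical.
RULES = [
    (lambda tx: tx["amount"] > 10_000, "flag_for_review"),
    (lambda tx: tx["country"] not in {"GB", "US"}, "require_2fa"),
]


def simple_reflex_agent(tx: dict) -> str:
    """Return the action of the first matching rule; no memory, no planning."""
    for condition, action in RULES:
        if condition(tx):
            return action
    return "approve"
```

The agent only ever sees the current transaction. That is why it is fast, and also why it breaks as soon as the patterns it was written for change.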

Model-Based Reflex Agents

Model-Based Reflex Agents go one step further. They maintain an internal representation of the world, which means they can handle parts of the environment they can’t directly observe right now by reasoning from what they have already seen. A customer support bot that remembers what was said three messages ago is relying on a model-based architecture. The model isn’t learning, but it is tracking.
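As a rough illustration, the support bot example might look like the sketch below. The class name, the `#1234` order-number convention, and the reply strings are assumptions made for the example.

```python
class ModelBasedSupportBot:
    """Fixed rules plus an internal state that is updated from each message."""

    def __init__(self) -> None:
        self.state = {}  # the agent's model of the conversation so far

    def handle(self, message: str) -> str:
        # Update the internal model from what is observable in this message.
        for word in message.split():
            if word.startswith("#"):
                self.state["order_id"] = word.strip("#.,?!")
        # The rules are fixed, but they consult tracked state, not just input.
        if "refund" in message.lower():
            order_id = self.state.get("order_id")
            if order_id is None:
                return "Which order is this about?"
            return f"Starting a refund for order {order_id}."
        return "How can I help?"


bot = ModelBasedSupportBot()
first_reply = bot.handle("Hi, I have a problem with order #1234.")
second_reply = bot.handle("Can I get a refund?")
```

The second message never mentions an order, yet the bot can act on it because the order number was tracked from the first one.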

Goal-Based Agents

Goal-Based Agents shift from reactive to proactive. Instead of just responding to stimuli, they evaluate possible actions against a defined objective and pick the one most likely to reach it. A logistics agent optimising delivery routes doesn’t just react to traffic — it works backward from a destination and plans a sequence of steps. This is where you start seeing genuine planning capability.
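Planning toward a goal can be sketched with a breadth-first search over a small graph. This is one simple planning technique chosen for illustration, not a claim about how production routing engines work. The road network and stop names are hypothetical.

```python
from collections import deque
from typing import Optional

# Hypothetical road network: each stop lists the stops reachable from it.
ROADS = {
    "depot": ["north", "east"],
    "north": ["customer"],
    "east": ["south"],
    "south": ["customer"],
    "customer": [],
}


def plan_route(start: str, goal: str, roads: dict,
               blocked: frozenset = frozenset()) -> Optional[list]:
    """Breadth-first search: shortest sequence of stops that reaches the goal."""
    queue = deque([[start]])
    visited = {start}
    while queue:
        path = queue.popleft()
        if path[-1] == goal:
            return path
        for nxt in roads[path[-1]]:
            if nxt not in visited and nxt not in blocked:
                visited.add(nxt)
                queue.append(path + [nxt])
    return None  # no sequence of steps reaches the goal


normal_plan = plan_route("depot", "customer", ROADS)
detour_plan = plan_route("depot", "customer", ROADS, blocked=frozenset({"north"}))
```

When the `north` stop is blocked, the agent does not just react: it replans a different sequence of steps that still ends at the same goal.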

Utility-Based Agents

Utility-Based Agents add another layer: instead of pursuing a binary goal (reached or not reached), they weigh trade-offs. Multiple criteria — cost, speed, risk, customer satisfaction — are scored and the agent selects the action with the highest expected utility. Financial portfolio agents, dynamic pricing systems, and resource allocation tools typically fall into this category. The challenge is that designing accurate utility functions is genuinely hard.
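A weighted-sum utility function is one common way to express those trade-offs. The sketch below assumes normalised scores between 0 and 1. The weights and shipping options are invented for the example.

```python
# Hypothetical weights: negative values penalise cost, delay, and risk.
BALANCED = {"cost": -0.5, "hours": -0.3, "risk": -0.2}
TIME_CRITICAL = {"cost": -0.1, "hours": -0.8, "risk": -0.1}

# Hypothetical options with scores normalised to the range 0..1.
OPTIONS = {
    "air":  {"cost": 0.9, "hours": 0.1, "risk": 0.2},
    "road": {"cost": 0.4, "hours": 0.5, "risk": 0.3},
    "sea":  {"cost": 0.2, "hours": 1.0, "risk": 0.4},
}


def utility(scores: dict, weights: dict) -> float:
    """Weighted sum of the criteria; higher is better."""
    return sum(weights[name] * scores[name] for name in weights)


def choose(options: dict, weights: dict) -> str:
    """Pick the option with the highest utility under the given weights."""
    return max(options, key=lambda name: utility(options[name], weights))


balanced_choice = choose(OPTIONS, BALANCED)
urgent_choice = choose(OPTIONS, TIME_CRITICAL)
```

The same options produce different decisions under different weights, which is exactly why getting the utility function right is the hard part.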

Learning Agents

Learning Agents are the most adaptive of the five. They don’t just act on the current state — they improve. Using reinforcement learning, supervised learning, or continuous feedback loops, they update their behaviour over time. Netflix’s recommendation engine, fraud detection systems that evolve with new attack patterns, predictive maintenance models — these are all learning agents at work. The power is real. So is the governance overhead: a learning agent that’s poorly monitored can drift in ways that create regulatory or business risk.
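The core idea, updating behaviour from feedback, can be shown with a running-average value estimate per action. This is a deliberately simplified sketch: the channel names and reward numbers are made up, and it does not explore or monitor for drift the way a production learning agent would need to.

```python
class LearningAgent:
    """Keeps a running average reward per action and prefers the best so far."""

    def __init__(self, actions: list) -> None:
        self.value = {a: 0.0 for a in actions}
        self.count = {a: 0 for a in actions}

    def choose(self) -> str:
        # Try every action once, then exploit the best current estimate.
        for action, tries in self.count.items():
            if tries == 0:
                return action
        return max(self.value, key=self.value.get)

    def learn(self, action: str, reward: float) -> None:
        # Incremental mean: move the estimate toward the observed reward.
        self.count[action] += 1
        self.value[action] += (reward - self.value[action]) / self.count[action]


# Hypothetical feedback: how often each channel gets a customer response.
FEEDBACK = {"email": 0.2, "sms": 0.7}

agent = LearningAgent(["email", "sms"])
for _ in range(5):
    picked = agent.choose()
    agent.learn(picked, FEEDBACK[picked])
```

After a handful of interactions the agent's estimates, and therefore its behaviour, reflect the feedback it received rather than anything hard-coded.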

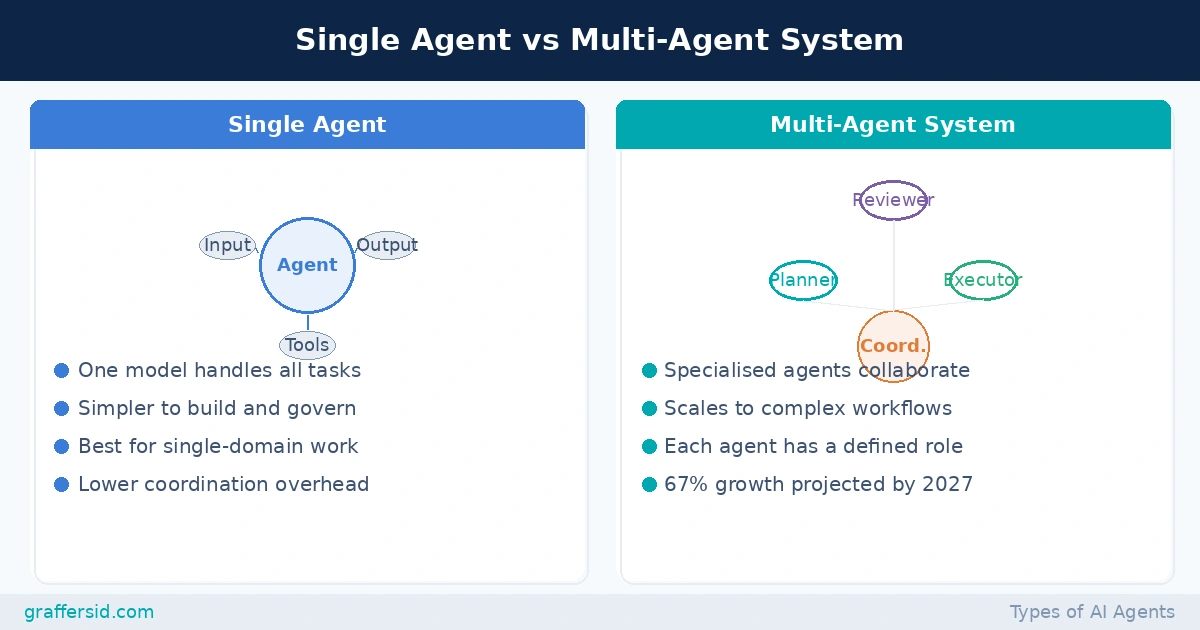

The five types above describe individual agents. But most of the interesting enterprise work happening in 2026 involves multiple agents working together.


Single Agent vs Multi-Agent System

A direct comparison of single-agent setups and multi-agent systems, highlighting when each architecture is the better fit.

A multi-agent system assigns specialised roles to individual agents — a planner, a researcher, an executor, a reviewer — and coordinates them toward a shared objective. Think of it as the difference between one generalist doing everything and a well-run team with clear job descriptions. Workflow automation is already the top use case in 64% of agent deployments, and multi-agent adoption is projected to surge 67% by 2027 as enterprises stitch agents together across systems.
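Stripped of the LLM calls, the coordination pattern looks roughly like this. Every function here is a hypothetical stand-in for a specialised agent. Frameworks such as CrewAI, LangGraph, and AutoGen each have their own APIs, which this sketch does not attempt to reproduce.

```python
def planner(task: str) -> list:
    """Break the task into ordered steps."""
    return [f"research: {task}", f"draft: {task}"]


def researcher(step: str) -> str:
    """Gather supporting material for one step."""
    return f"notes for '{step}'"


def executor(step: str, notes: str) -> str:
    """Carry out one step using the researcher's notes."""
    return f"output of '{step}' using {notes}"


def reviewer(output: str) -> bool:
    """Approve or reject a result before it is accepted."""
    return output.startswith("output of")


def orchestrate(task: str) -> list:
    """Coordinate the specialised agents toward one shared objective."""
    approved = []
    for step in planner(task):
        notes = researcher(step)
        result = executor(step, notes)
        if reviewer(result):
            approved.append(result)
    return approved


results = orchestrate("Q3 report")
```

Each role can be swapped or scaled independently, and the `orchestrate` function is where the coordination cost lives.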

ReAct agents sit at the intersection of several types above. They combine reasoning and action in a continuous loop: think through the problem, take an action, observe the outcome, update the plan, repeat. Understanding how ReAct agents work is useful for any team evaluating LLM-native architectures, because this pattern is increasingly the backbone of production-grade agentic systems.
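The think, act, observe loop can be sketched without a model at all. In the example below, `scripted_reasoner` is a hard-coded stand-in for the LLM call, and the `lookup_price` tool and its prices are invented for the example.

```python
def lookup_price(item: str) -> str:
    """Hypothetical tool: return a unit price as text."""
    return {"widget": "4", "gadget": "10"}.get(item, "unknown")


TOOLS = {"lookup_price": lookup_price}


def scripted_reasoner(question: str, observations: list) -> tuple:
    """Stand-in for an LLM call: returns (thought, action, argument)."""
    if not observations:
        return ("I need the unit price first.", "lookup_price", "widget")
    total = int(observations[-1]) * 3
    return ("I have the price, so I can answer.", "finish", str(total))


def react(question: str, reasoner, tools: dict, max_turns: int = 5) -> tuple:
    """Alternate reasoning and tool use until the reasoner decides to finish."""
    observations, trace = [], []
    for _ in range(max_turns):
        thought, action, arg = reasoner(question, observations)   # think
        trace.append(f"Thought: {thought}")
        if action == "finish":
            return arg, trace
        observation = tools[action](arg)                          # act
        trace.append(f"Action: {action}({arg}) -> {observation}")
        observations.append(observation)                          # observe
    return None, trace  # gave up after max_turns


answer, trace = react("What do 3 widgets cost?", scripted_reasoner, TOOLS)
```

In a real system the reasoner would be a model prompt that sees the full trace. The `max_turns` cap is the kind of guardrail that keeps such a loop from running indefinitely.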

Hybrid architectures, meanwhile, are exactly what they sound like. They layer reactive speed at the bottom (for immediate responses) with deliberative reasoning at the top (for strategic planning). An onboarding agent that instantly flags a missing document while also tracking overall progress against compliance requirements is a hybrid in practice, even if no one called it that during the design phase.
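The onboarding example might be layered like this. The document names, event shape, and function names are assumptions made for illustration.

```python
# Hypothetical compliance requirements for an onboarding flow.
REQUIRED = ["passport", "proof_of_address", "tax_form"]


def reactive_layer(event: dict):
    """Immediate rule: flag an upload that arrives without a file."""
    if event["type"] == "upload" and not event.get("file"):
        return f"missing file for {event['doc']}"
    return None


def deliberative_layer(received: set) -> list:
    """Slower reasoning: what is still outstanding against the requirements."""
    return [doc for doc in REQUIRED if doc not in received]


def hybrid_agent(events: list) -> tuple:
    """Run the fast rule on every event, then assess overall progress."""
    received, alerts = set(), []
    for event in events:
        alert = reactive_layer(event)
        if alert:
            alerts.append(alert)
        elif event["type"] == "upload":
            received.add(event["doc"])
    return alerts, deliberative_layer(received)


alerts, outstanding = hybrid_agent([
    {"type": "upload", "doc": "passport", "file": "p.pdf"},
    {"type": "upload", "doc": "tax_form", "file": ""},
])
```

The reactive layer answers per event, while the deliberative layer only needs to run when someone asks how far along the process is.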

For teams starting to explore what’s available, spending time with the emerging AI tools worth knowing in 2026 gives a useful picture of the frameworks — CrewAI, LangGraph, AutoGen, and others — that are being used to build these systems in production.

Which Type of AI Agent Does Your Business Actually Need?

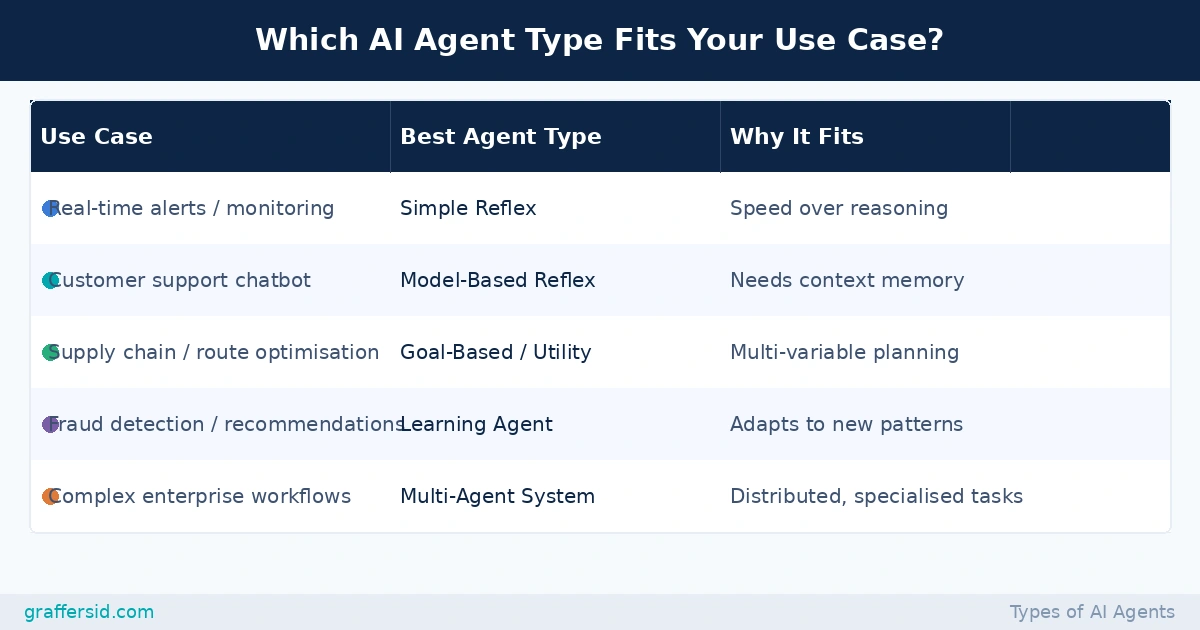

This is where most conversations should start, but usually don’t. Here’s a practical decision framework.

- If your task is high-volume, low-variation, and timing-sensitive — think real-time alerting, transaction flagging, or automated routing — a simple reflex or model-based agent is probably the right fit. Adding reasoning overhead to a system that doesn’t need it just introduces latency and failure modes.

- If your task requires planning across multiple steps — supply chain optimisation, multi-stage customer journey automation, resource scheduling — you’re in goal-based or utility-based territory. The key question here is whether you can define success precisely. If you can’t specify what “good” looks like, a goal-based agent will struggle.

- If your environment changes significantly over time — new fraud patterns, shifting customer behaviour, evolving market conditions — a learning agent earns its complexity. But it needs continuous data, ongoing retraining infrastructure, and governance processes to catch drift before it becomes a problem.

- If you’re building workflows that span multiple departments, tools, or decision types — enterprise automation, cross-functional data pipelines, product development support — a multi-agent system is likely where you’re headed. The coordination layer is real engineering work. It’s worth reading through how to build an AI agent at a production level before committing to this architecture, because the tooling and governance requirements are meaningfully different from a single-agent deployment.
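The framework above can be written down as a small decision function. The parameter names and the order in which the checks run are assumptions made for this sketch. Real selection involves more judgement than four booleans.

```python
def recommend_agent_type(*, spans_multiple_teams: bool,
                         environment_shifts: bool,
                         needs_multistep_planning: bool,
                         has_competing_criteria: bool) -> str:
    """Map coarse task traits to a starting-point agent type."""
    if spans_multiple_teams:
        return "multi-agent system"
    if environment_shifts:
        return "learning agent"
    if needs_multistep_planning:
        # Trade-offs between criteria suggest utility over a single goal.
        if has_competing_criteria:
            return "utility-based agent"
        return "goal-based agent"
    return "simple reflex or model-based agent"


alerting = recommend_agent_type(
    spans_multiple_teams=False,
    environment_shifts=False,
    needs_multistep_planning=False,
    has_competing_criteria=False,
)
```

Treat the output as the opening position in an architecture discussion, not the conclusion of one.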

IBM frames it the same way: all five core agent types can work together within a multi-agent system, with each agent specialising in the part of the task it’s best suited for. The practical implication is that you’re rarely choosing just one. You’re designing a composition.


Which AI Agent Type Is Right for Your Use Case?

A decision table mapping common enterprise use cases to the most appropriate AI agent type, with rationale.

Picking the Type Is Step One

Choosing between agent types isn’t the hardest part of building with AI. Executing reliably — with the right architecture, memory design, tool integration, and governance layer — is where most teams hit friction.

The companies making the most progress in 2026 aren’t necessarily the ones with the largest AI budgets. They’re the ones that matched the right agent type to the right problem, built it properly the first time, and had the engineering talent to iterate fast when the environment changed.

If you’re at the stage of deciding what to build and who should build it, GraffersID works with startups and enterprises to staff experienced AI developers who understand agentic architectures, not just LLM wrappers. Get in touch to talk through your use case.